Install and run:

pip install thermal-face-alignmentimport cv2

from tfan import ThermalLandmarks

# Read a thermal image (grayscale)

image = cv2.imread("thermal.png", cv2.IMREAD_GRAYSCALE)

# Initialize landmarker (downloads weights on first use)

landmarker = ThermalLandmarks(device="cpu", n_landmarks=70)

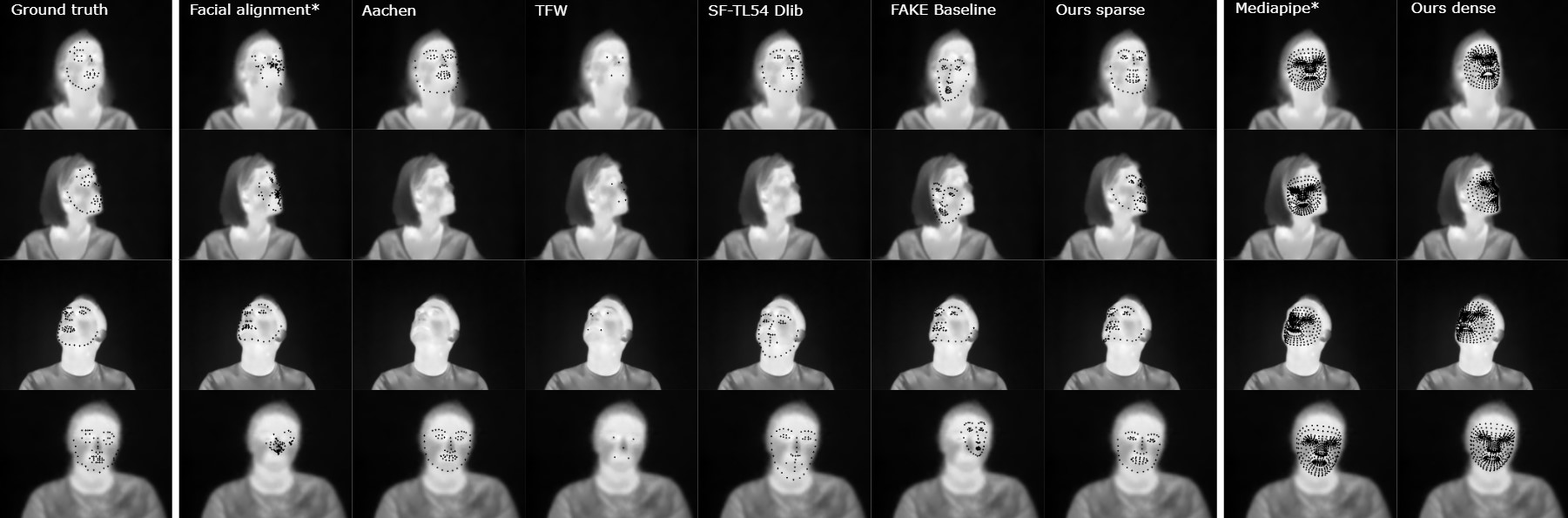

landmarks, confidences = landmarker.process(image) Predicted 70 and 478 point landmarks on an example from the TFW Dataset.

Predicted 70 and 478 point landmarks on an example from the TFW Dataset.

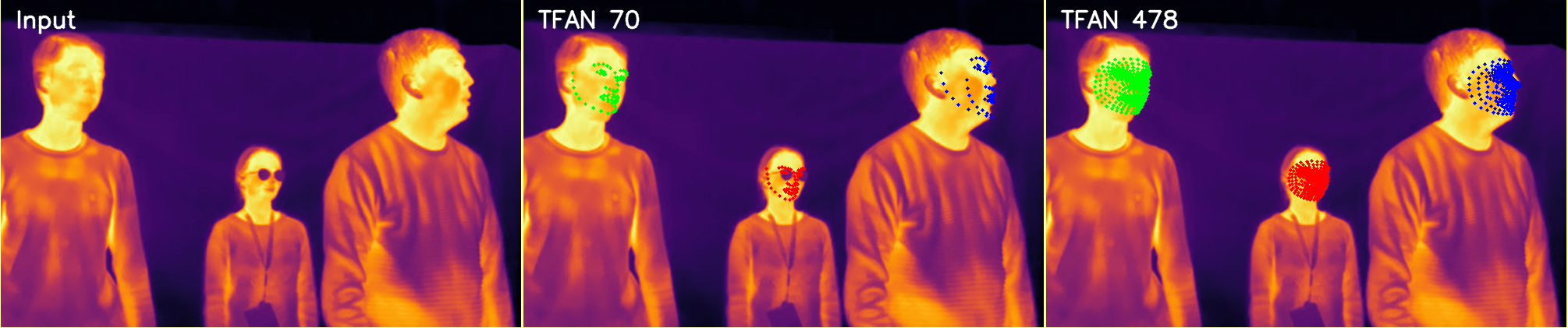

Predicted 70 and 478 point landmarks on an example from the BU-TIV Benchmark.


The `ThermalLandmarks` class wraps a landmarker trained on T-FAKE. Faces are located either with a tracker-free sliding window that selects the face with the lowest uncertainty, or via a bounding box computed with a smaller model.

Please note that we trained our network on a temperature range of 20°C to 40°C. Although our implementation rescales inputs automatically, please make sure that you adapt the landmarker options to your input pixel values.
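
To make the rescaling concrete, here is a minimal sketch of a linear mapping from the 20°C to 40°C training range onto `[0, 255]`. It is an illustration only and not the library's internal implementation; the function name `celsius_to_uint8` and the clipping behaviour are assumptions made for this example.

```python
def celsius_to_uint8(temps, t_min=20.0, t_max=40.0):
    """Linearly map temperatures in degrees Celsius to integers in [0, 255].

    `temps` is a 2D list of floats. Values outside [t_min, t_max] are clipped.
    This is a hypothetical helper, not part of the tfan package.
    """
    if t_max <= t_min:
        raise ValueError("t_max must be greater than t_min")
    scale = 255.0 / (t_max - t_min)
    out = []
    for row in temps:
        out_row = []
        for t in row:
            clipped = min(max(t, t_min), t_max)  # clip to the training range
            out_row.append(int(round((clipped - t_min) * scale)))
        out.append(out_row)
    return out


# Toy 2x3 "thermal frame" in degrees Celsius.
frame = [[18.0, 20.0, 25.0], [36.5, 40.0, 45.0]]
scaled = celsius_to_uint8(frame)
```

With NumPy arrays, the same mapping could be written with `np.clip` followed by a multiplication. Whether you need it at all depends on how your camera exports its data.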

    ThermalLandmarks(
        model_path=None,
        device="cpu",
        gpus=[0, 1],
        eta=0.75,
        max_lvl=0,
        stride=100,
        n_landmarks=478,  # 478 or 70 point landmarks are supported
        normalize=True,
    )

- `model_path` (`str` or `Path`, optional): Path to a pretrained DMMv2 model (state_dict). If omitted, pretrained weights matching `n_landmarks` are downloaded automatically.
- `device` (`"cpu"` or `"cuda"`, default `"cpu"`): Torch device used for inference. When using `"cuda"`, the model may be wrapped in `DataParallel`.
- `gpus` (`list[int]`, default `[0, 1]`): GPU device IDs used when `device="cuda"`.
- `n_landmarks` (`int`, default `478`): Number of facial landmarks predicted per face. Choices:
  - `70`: sparse landmarks following the Face Synthetic convention of (Wood et al., 2021).
  - `478`: dense landmarks following the MediaPipe face mesh convention.
- `normalize` (`bool`, default `True`): Apply ImageNet normalization to cropped face patches before inference. Assumes inputs are scaled to `[0, 255]`.
- `eta` (`float`, default `0.75`): Pyramid scale factor used in sliding-window mode.
- `max_lvl` (`int`, default `0`): Maximum pyramid level for multi-scale sliding-window inference.
- `stride` (`int`, default `100`): Pixel stride used during sliding-window scanning.
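
As a rough illustration of how `eta`, `max_lvl`, and `stride` could interact in sliding-window mode, the sketch below enumerates pyramid scales and window origins. The window size of 112 pixels, the helper names, and the border handling are assumptions made for this example; the library's internals may differ.

```python
def pyramid_scales(eta=0.75, max_lvl=0):
    """Return one scale factor per pyramid level 0..max_lvl (level 0 is full size)."""
    return [eta ** lvl for lvl in range(max_lvl + 1)]


def window_origins(height, width, window=112, stride=100):
    """Return top-left (y, x) corners of square windows covering the image.

    The last window along each axis is shifted back so that it ends exactly
    at the image border. `window=112` is an assumed patch size.
    """
    def starts(size):
        if size <= window:
            return [0]
        positions = list(range(0, size - window + 1, stride))
        if positions[-1] != size - window:
            positions.append(size - window)
        return positions

    return [(y, x) for y in starts(height) for x in starts(width)]


scales = pyramid_scales(eta=0.75, max_lvl=2)
origins = window_origins(height=224, width=312, window=112, stride=100)
```

In this sketch, a stride larger than the window leaves gaps between neighbouring windows, so faces falling into a gap are only seen by the border-aligned window, if at all.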

The `process` method takes the following arguments:

    landmarks, confidences = landmarker.process(
        image,
        sliding_window=False,
        multi=False,
        mode="auto",
        upsample_factor=1.0,
        nms_iou_threshold=0.5,
        uncertainty_factor=None,
        top_k=None,
    )

- `image` (`numpy.ndarray`): Input frame:
  - `H×W`: thermal or grayscale image
  - `H×W×3`: RGB/BGR image
- `mode` (`"auto" | "temperature" | "pixel"`, default `"auto"`): Controls how numeric values are interpreted:
  - `"temperature"`: 2D thermal image in °C
  - `"pixel"`: pixel intensities in `[0, 255]` or `[0, 1]`
  - `"auto"`: inferred from dtype and value range
- `multi` (`bool`, default `False`): If `True`, return landmarks for all detected faces. If `False`, only the first face is returned.
- `sliding_window` (`bool`, default `False`): Enable multi-scale sliding-window inference. This path does not run the YOLO/TFW face tracker. With `multi=True`, overlapping candidates are merged with NMS. Note: `stride > 112` is suboptimal for `multi=True`.
- `upsample_factor` (`float`, default `1.0`): Optionally upsample the input before inference. Values larger than `1.0` can help with very small faces. Returned landmarks stay in the original image coordinates.
- `nms_iou_threshold` (`float`, default `0.5`): IoU threshold used by sliding-window NMS when `sliding_window=True` and `multi=True`.
- `uncertainty_factor` (`float`, optional): Optional post-NMS pruning factor for sliding-window multi-face inference. Keeps detections whose mean uncertainty is at most `best_mean_uncertainty * uncertainty_factor`.
- `top_k` (`int`, optional): Optional maximum number of sliding-window detections to keep after NMS and uncertainty filtering.
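
The interplay of `nms_iou_threshold`, `uncertainty_factor`, and `top_k` can be sketched as a small post-processing pipeline. The code below is a generic greedy NMS that prefers candidates with lower mean uncertainty, followed by the pruning rule described above. It is not taken from the library; the function names and the `(box, mean_uncertainty)` candidate format are assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def select_faces(candidates, nms_iou_threshold=0.5,
                 uncertainty_factor=None, top_k=None):
    """Filter (box, mean_uncertainty) candidates; lowest uncertainty wins."""
    ordered = sorted(candidates, key=lambda c: c[1])
    kept = []
    for box, unc in ordered:
        # Keep a candidate only if it does not overlap a better one too much.
        if all(iou(box, k[0]) <= nms_iou_threshold for k in kept):
            kept.append((box, unc))
    if uncertainty_factor is not None and kept:
        # Prune relative to the best (lowest) mean uncertainty.
        limit = kept[0][1] * uncertainty_factor
        kept = [c for c in kept if c[1] <= limit]
    if top_k is not None:
        kept = kept[:top_k]
    return kept


candidates = [
    ((0, 0, 100, 100), 0.10),
    ((10, 10, 110, 110), 0.12),    # overlaps the first box heavily
    ((200, 200, 300, 300), 0.15),
    ((400, 0, 500, 100), 0.40),    # far less certain than the best face
]
faces = select_faces(candidates, nms_iou_threshold=0.5, uncertainty_factor=2.0)
```

In this toy input, the second candidate is suppressed by NMS and the fourth is pruned by the uncertainty rule, which leaves two faces.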

Returns:

- `landmarks`: Pixel coordinates in the original image:
  - List of `(n_landmarks, 2)` arrays (multi-face)
  - Single `(n_landmarks, 2)` array (sliding window)
- `confidences`: Per-landmark uncertainty scores of shape `(n_landmarks,)`
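
The returned values can be post-processed with ordinary Python. As a sketch, the helper below keeps only the landmarks whose uncertainty is at or below a threshold. The name `reliable_points` and the threshold value are hypothetical and not part of the library; plain lists stand in for the NumPy arrays here.

```python
def reliable_points(landmarks, uncertainties, max_uncertainty):
    """Return (index, (x, y)) for landmarks with uncertainty <= max_uncertainty.

    `landmarks` is a sequence of (x, y) pairs for one face and `uncertainties`
    holds one score per landmark (lower means more reliable).
    """
    if len(landmarks) != len(uncertainties):
        raise ValueError("landmarks and uncertainties must have equal length")
    return [
        (i, (float(x), float(y)))
        for i, ((x, y), u) in enumerate(zip(landmarks, uncertainties))
        if u <= max_uncertainty
    ]


# Toy stand-in for one face with three landmarks.
landmarks = [(10.0, 20.0), (30.5, 40.5), (50.0, 60.0)]
uncertainties = [0.05, 0.80, 0.10]
good = reliable_points(landmarks, uncertainties, max_uncertainty=0.2)
```

A suitable threshold depends on the model and the data, so it is best chosen by inspecting the scores on a few of your own images.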

This landmarker is an implementation of our work presented in our CVPR paper on thermal landmarking (Main GitHub). We employed the TFW face detector for our initial face detection, as it performed very well in our benchmark. Please note that this library is meant for research purposes only.

We trained our landmarker on our custom-made T-FAKE dataset consisting of synthetic thermal images. To download the original color images, sparse annotations, and segmentation masks for the dataset, please use the links in the FaceSynthetics repository.

Our dataset was generated for a warm and a cold condition. Each variant can be downloaded separately:

- A small sample with 100 images from here warm and here cold

- A medium sample with 1,000 images from here warm and here cold

- The full dataset with 100,000 images from here warm and here cold

- The dense annotations are available from here

The models for the thermalization as well as the landmarkers can be downloaded from here.

Our landmarking methods and the training dataset are licensed under the Attribution-NonCommercial-ShareAlike 4.0 International license, as they are derived from the FaceSynthetics dataset.

If you use this code for your own work, please cite our paper:

P. Flotho, M. Piening, A. Kukleva and G. Steidl, “T-FAKE: Synthesizing Thermal Images for Facial Landmarking,” Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR), 2025. CVF Open Access

BibTeX entry

    @InProceedings{tfake2025_CVPR,
        author    = {Flotho, Philipp and Piening, Moritz and Kukleva, Anna and Steidl, Gabriele},
        title     = {T-FAKE: Synthesizing Thermal Images for Facial Landmarking},
        booktitle = {Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR)},
        month     = {June},
        year      = {2025},
        pages     = {26356-26366}
    }

The thermal face bounding box detection in this repo uses the TFW landmarker model. Please additionally cite:

Kuzdeuov, A., Aubakirova, D., Koishigarina, D., & Varol, H. A. (2022). TFW: Annotated Thermal Faces in the Wild Dataset. IEEE Transactions on Information Forensics and Security, 17, 2084–2094. https://doi.org/10.1109/TIFS.2022.3177949

    @article{9781417,
        author  = {Kuzdeuov, Askat and Aubakirova, Dana and Koishigarina, Darina and Varol, Huseyin Atakan},
        journal = {IEEE Transactions on Information Forensics and Security},
        title   = {TFW: Annotated Thermal Faces in the Wild Dataset},
        year    = {2022},
        volume  = {17},
        pages   = {2084-2094},
        doi     = {10.1109/TIFS.2022.3177949}
    }