This repository provides the official implementation of the paper:

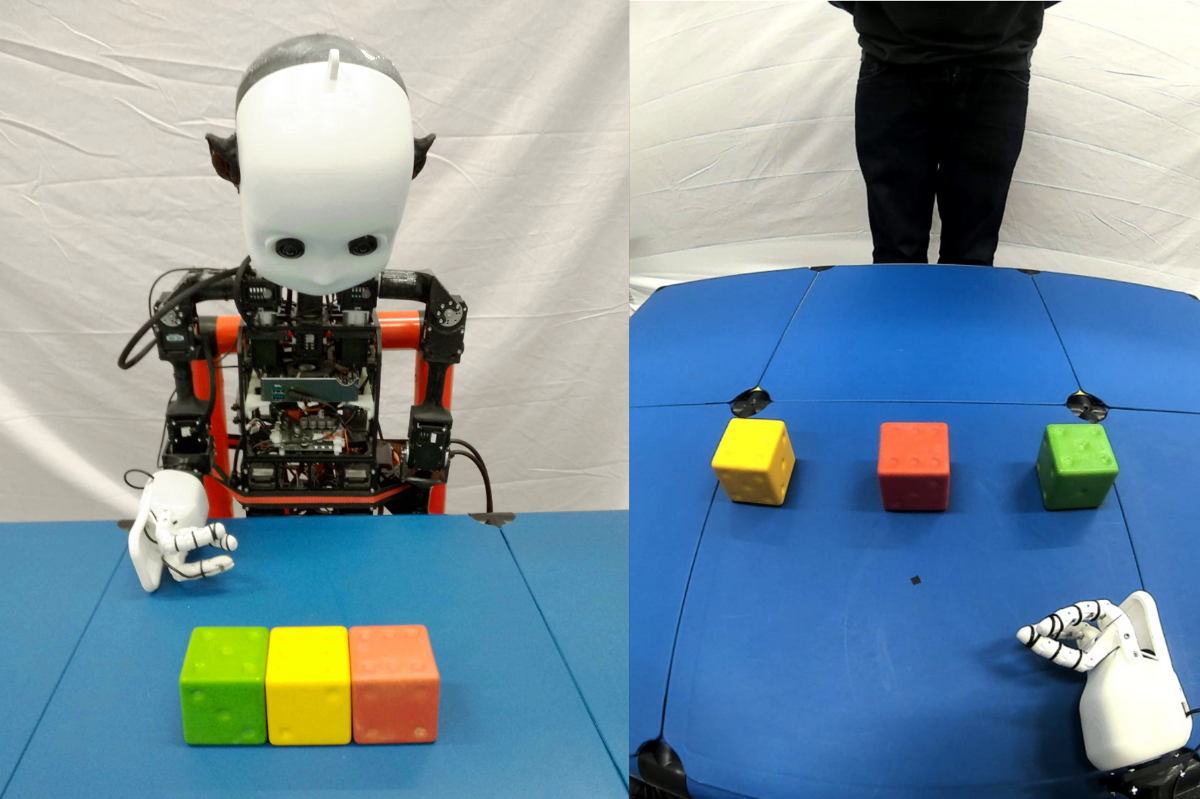

Solving Visual Object Ambiguities when Pointing: An Unsupervised Learning Approach (Neural Computing and Applications 2020)

*‡Doreen Jirak, *†David Biertimpel, *Matthias Kerzel and *Stefan Wermter

*University of Hamburg, ‡Istituto Italiano di Tecnologia, †University of Amsterdam

Pre-print: https://arxiv.org/abs/1912.06449

Note: Research was conducted at University of Hamburg

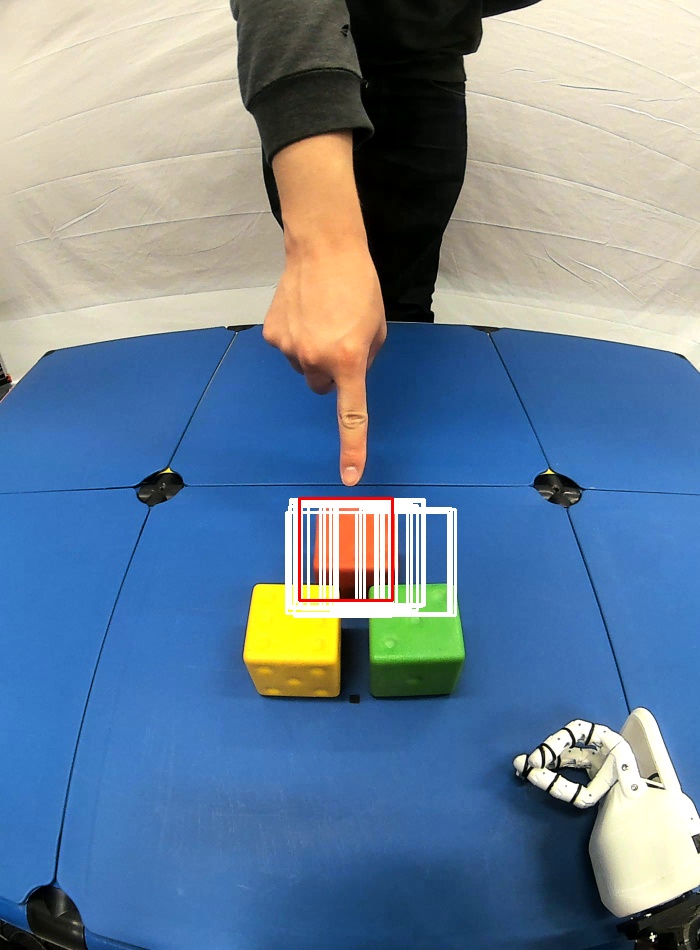

Whenever we are addressing a specific object or refer to a certain spatial location, we are using referential or deictic gestures usually accompanied by some verbal description. Particularly, pointing gestures are necessary to dissolve ambiguities in a scene and they are of crucial importance when verbal communication may fail due to environmental conditions or when two persons simply do not speak the same language. With the currently increasing advances of humanoid robots and their future integration in domestic domains, the development of gesture interfaces complementing human–robot interaction scenarios is of substantial interest. The implementation of an intuitive gesture scenario is still challenging because both the pointing intention and the corresponding object have to be correctly recognized in real time. The demand increases when considering pointing gestures in a cluttered environment, as is the case in households. Also, humans perform pointing in many different ways and those variations have to be captured. Research in this field often proposes a set of geometrical computations which do not scale well with the number of gestures and objects and use specific markers or a predefined set of pointing directions. In this paper, we propose an unsupervised learning approach to model the distribution of pointing gestures using a growing-when-required (GWR) network. We introduce an interaction scenario with a humanoid robot and define the so-called ambiguity classes. Our implementation for the hand and object detection is independent of any markers or skeleton models; thus, it can be easily reproduced. Our evaluation comparing a baseline computer vision approach with our GWR model shows that the pointing-object association is well learned even in cases of ambiguities resulting from close object proximity.
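For readers unfamiliar with GWR networks, the following is a minimal NumPy sketch of the general growing-when-required idea: a node is inserted whenever the best-matching node responds weakly to an input even though it is already well trained. It is not the implementation shipped in this repository. The class name `GWR`, all hyperparameter values, and the simplified habituation rule are assumptions chosen for illustration, and pruning of isolated nodes is omitted for brevity.

```python
import numpy as np


class GWR:
    """Minimal growing-when-required network (illustrative sketch only)."""

    def __init__(self, act_thresh=0.85, hab_thresh=0.1, eps_b=0.1, eps_n=0.01,
                 tau_b=0.3, tau_n=0.1, kappa=1.05, max_age=50):
        self.act_thresh = act_thresh            # grow if winner activity is below this
        self.hab_thresh = hab_thresh            # ... and the winner is already habituated
        self.eps_b, self.eps_n = eps_b, eps_n   # learning rates: winner, neighbours
        self.tau_b, self.tau_n = tau_b, tau_n   # habituation rates: winner, neighbours
        self.kappa = kappa                      # sets the lower bound of habituation
        self.max_age = max_age                  # edges older than this are removed
        self.weights = None                     # (n_nodes, dim) prototype vectors
        self.hab = None                         # habituation per node, 1.0 = untrained
        self.edges = {}                         # {(i, j): age} with i < j

    def _neighbours(self, s):
        return [j if i == s else i for (i, j) in self.edges if s in (i, j)]

    def bmu(self, x):
        """Index of the best-matching unit (closest prototype) for input x."""
        return int(np.argmin(np.linalg.norm(self.weights - x, axis=1)))

    def _step(self, x):
        dists = np.linalg.norm(self.weights - x, axis=1)
        s, t = (int(i) for i in np.argsort(dists)[:2])   # winner and runner-up
        st = (min(s, t), max(s, t))
        self.edges[st] = 0                               # connect them, age reset to 0
        activity = np.exp(-dists[s])
        if activity < self.act_thresh and self.hab[s] < self.hab_thresh:
            # Poor match from a trained winner: insert a node halfway to the input.
            r = len(self.weights)
            self.weights = np.vstack([self.weights, (self.weights[s] + x) / 2.0])
            self.hab = np.append(self.hab, 1.0)
            del self.edges[st]
            self.edges[(s, r)] = 0
            self.edges[(t, r)] = 0
        else:
            # Otherwise move the winner and its neighbours towards the input.
            self.weights[s] += self.eps_b * self.hab[s] * (x - self.weights[s])
            for n in self._neighbours(s):
                self.weights[n] += self.eps_n * self.hab[n] * (x - self.weights[n])
        # Nodes that fired become more habituated (their counters decrease).
        self.hab[s] += self.tau_b * self.kappa * (1.0 - self.hab[s]) - self.tau_b
        for n in self._neighbours(s):
            self.hab[n] += self.tau_n * self.kappa * (1.0 - self.hab[n]) - self.tau_n
        # Age the edges attached to the winner and drop the ones that are too old.
        for edge in [e for e in self.edges if s in e]:
            self.edges[edge] += 1
            if self.edges[edge] > self.max_age:
                del self.edges[edge]

    def fit(self, data, epochs=5):
        data = np.asarray(data, dtype=float)
        self.weights = data[:2].copy()          # start from the first two samples
        self.hab = np.ones(2)
        self.edges = {}
        for _ in range(epochs):
            for x in data:
                self._step(x)
        return self
```

After `fit`, calling `bmu` on a new feature vector returns the index of the closest learned prototype. How prototypes are associated with objects in the scene is specific to the paper and is not covered by this sketch.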

The demo script demo.py accepts the following parameters:

--gwr-model Path to the GWR model. Ignored when the pointing-array approach is used for prediction.

--skin-model Path to the skin-color model used for hand detection.

--demo-video Path to the demo video.

--use-pointing-array If set, the pointing array approach is used. By default, the GWR network is used.

With the default parameters in place, `python demo.py` runs the GWR-based approach and `python demo.py --use-pointing-array` runs the pointing-array-based approach. A demo run with all parameters specified looks as follows:

    python demo.py --gwr-model "resources/gwr_models/model_demo" \
        --skin-model "resources/skin_color_segmentation/saved_histograms/skin_probabilities_crcb.npy" \
        --demo-video "resources/test_videos/amb1_o3_r1_m.webm" \
        --use-pointing-array
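The four flags described above could be declared with `argparse` roughly as follows. This is a sketch, not the actual contents of demo.py: the function name `build_parser` is hypothetical, and the default values are assumed to match the paths in the example command.

```python
import argparse


def build_parser():
    """Build a parser mirroring the documented demo.py flags (illustrative only)."""
    parser = argparse.ArgumentParser(description="Pointing gesture demo")
    # Defaults below are assumptions taken from the example command.
    parser.add_argument(
        "--gwr-model",
        default="resources/gwr_models/model_demo",
        help="Path to the GWR model (ignored with --use-pointing-array).",
    )
    parser.add_argument(
        "--skin-model",
        default="resources/skin_color_segmentation/saved_histograms/"
                "skin_probabilities_crcb.npy",
        help="Path to the skin-color model used for hand detection.",
    )
    parser.add_argument(
        "--demo-video",
        default="resources/test_videos/amb1_o3_r1_m.webm",
        help="Path to the demo video.",
    )
    # A boolean switch: absent means the GWR network is used.
    parser.add_argument(
        "--use-pointing-array",
        action="store_true",
        help="Use the pointing-array approach instead of the GWR network.",
    )
    return parser
```

Declaring `--use-pointing-array` with `action="store_true"` matches the documented behaviour that the GWR network is used unless the flag is set.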

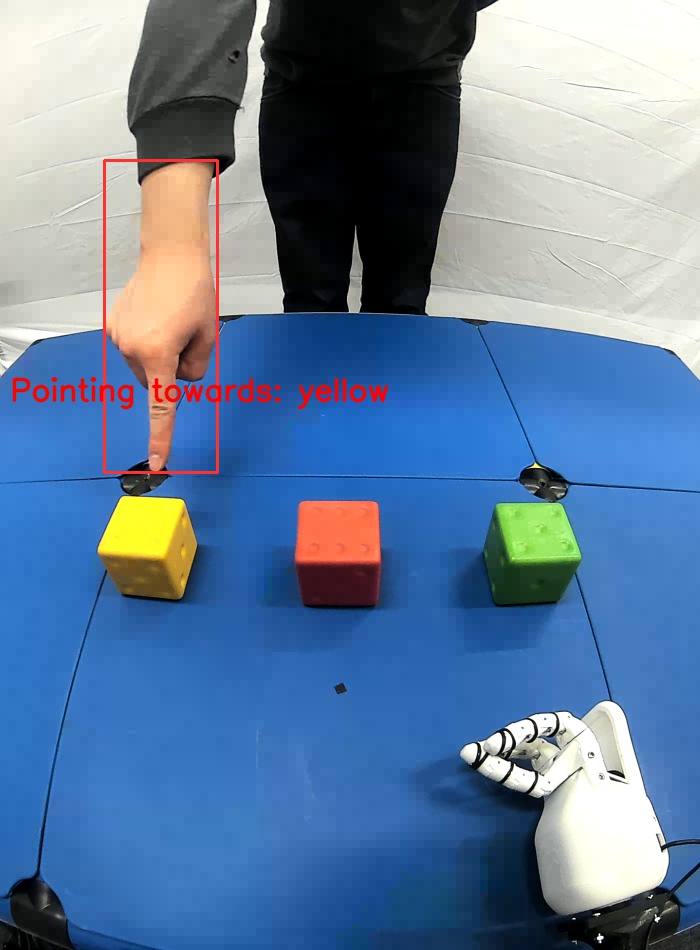

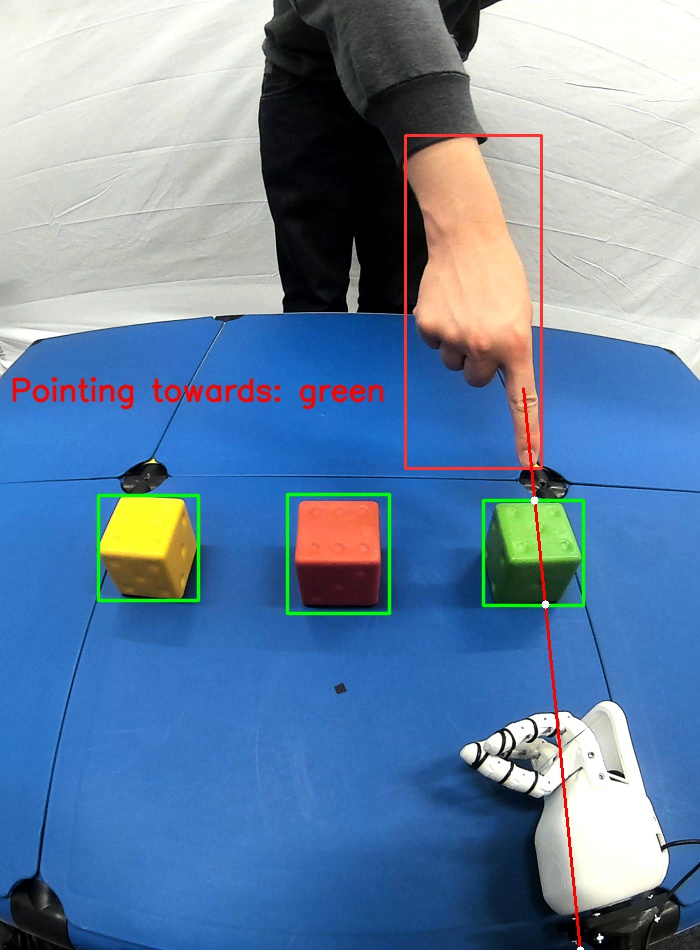

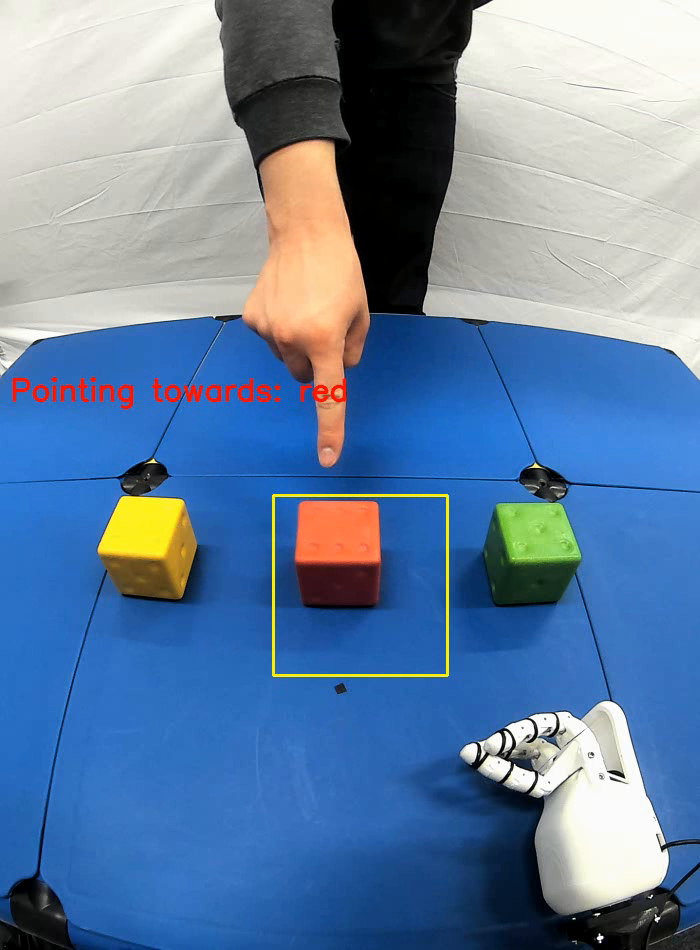

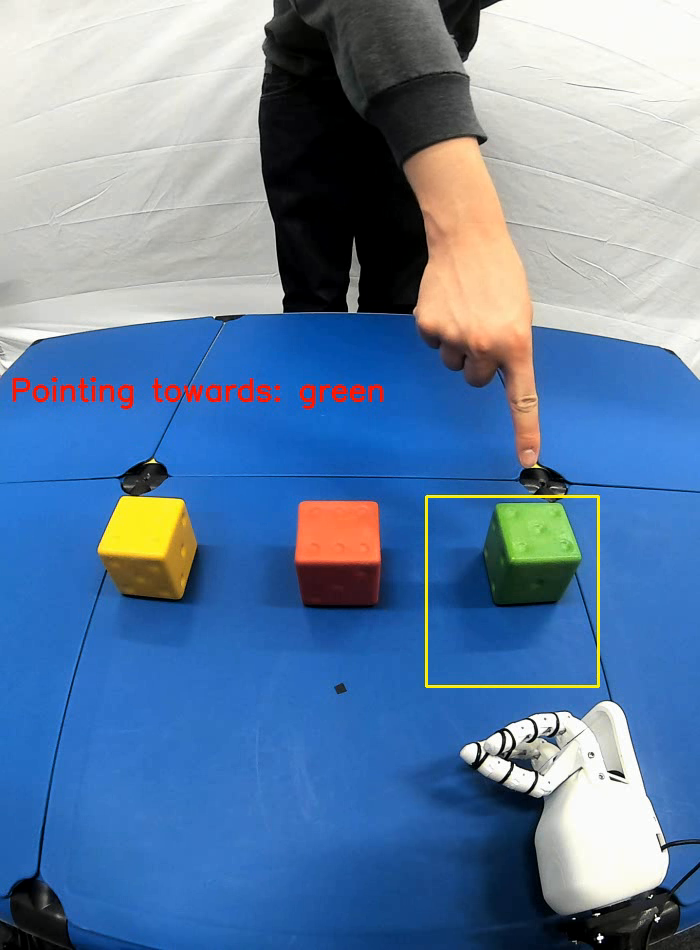

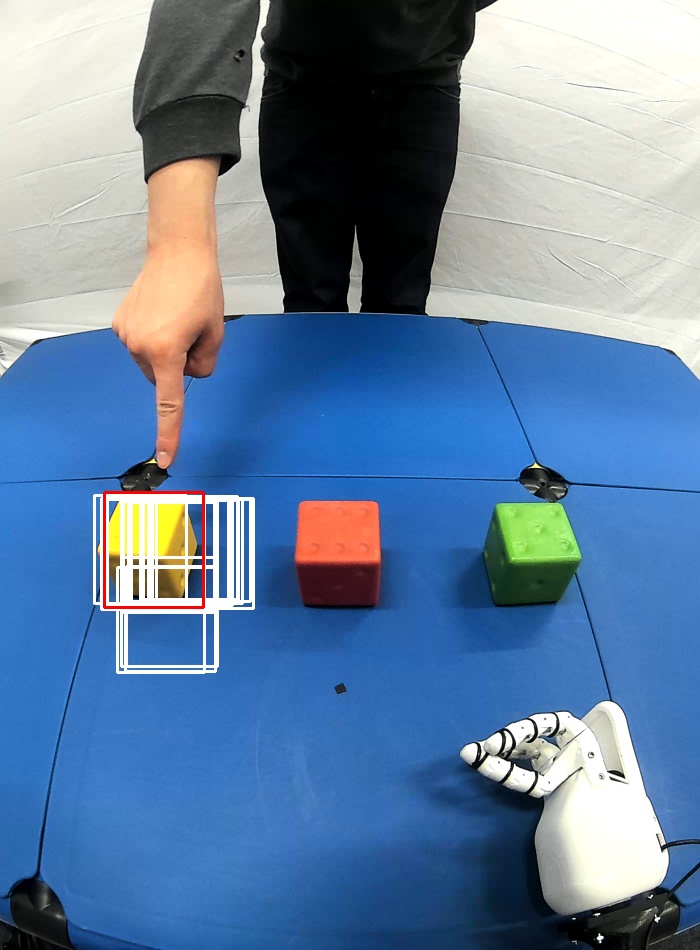

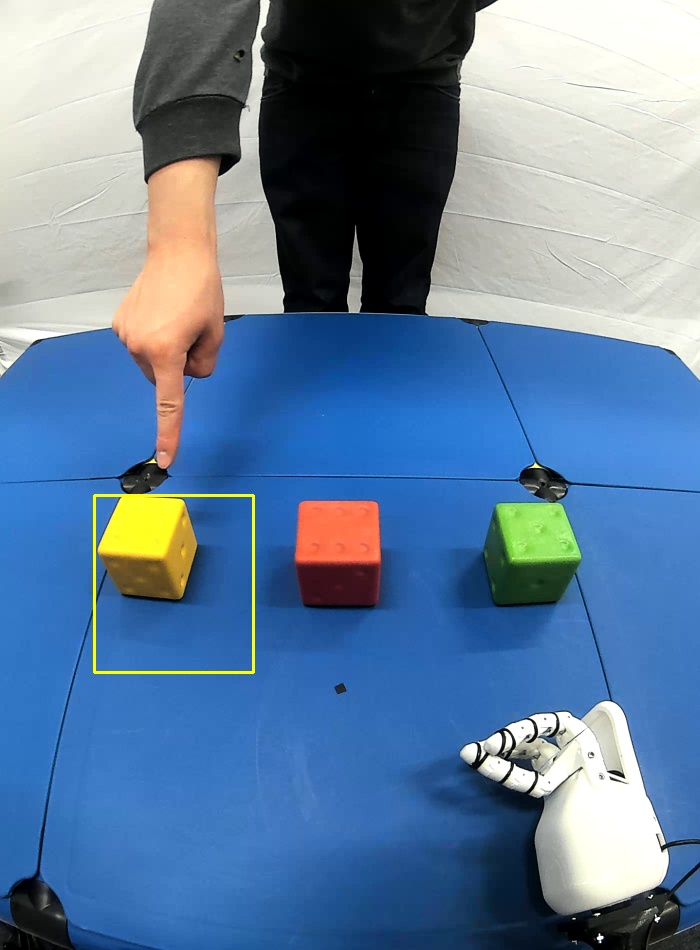

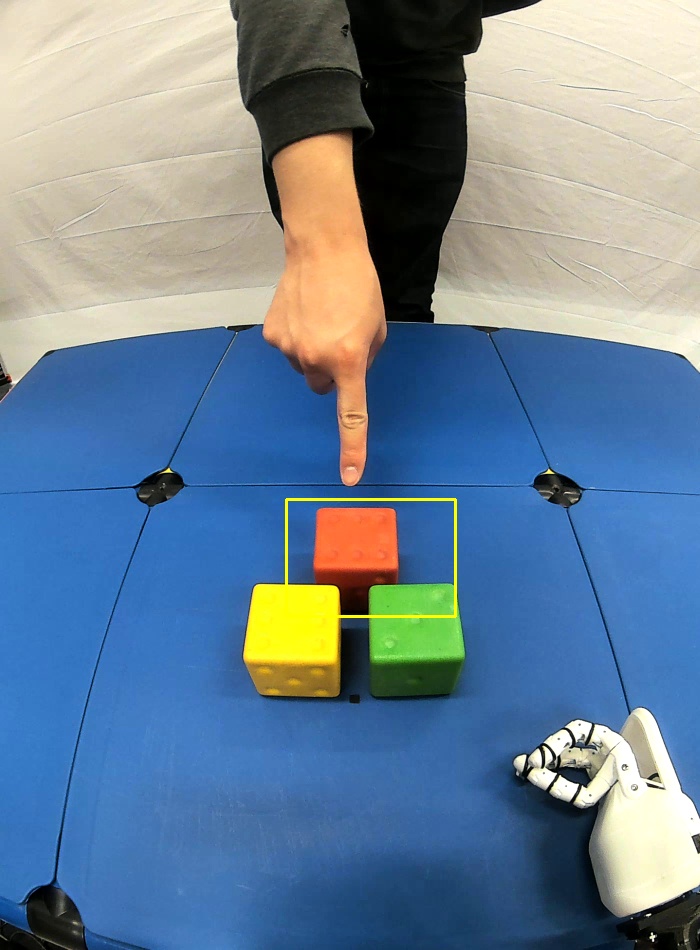

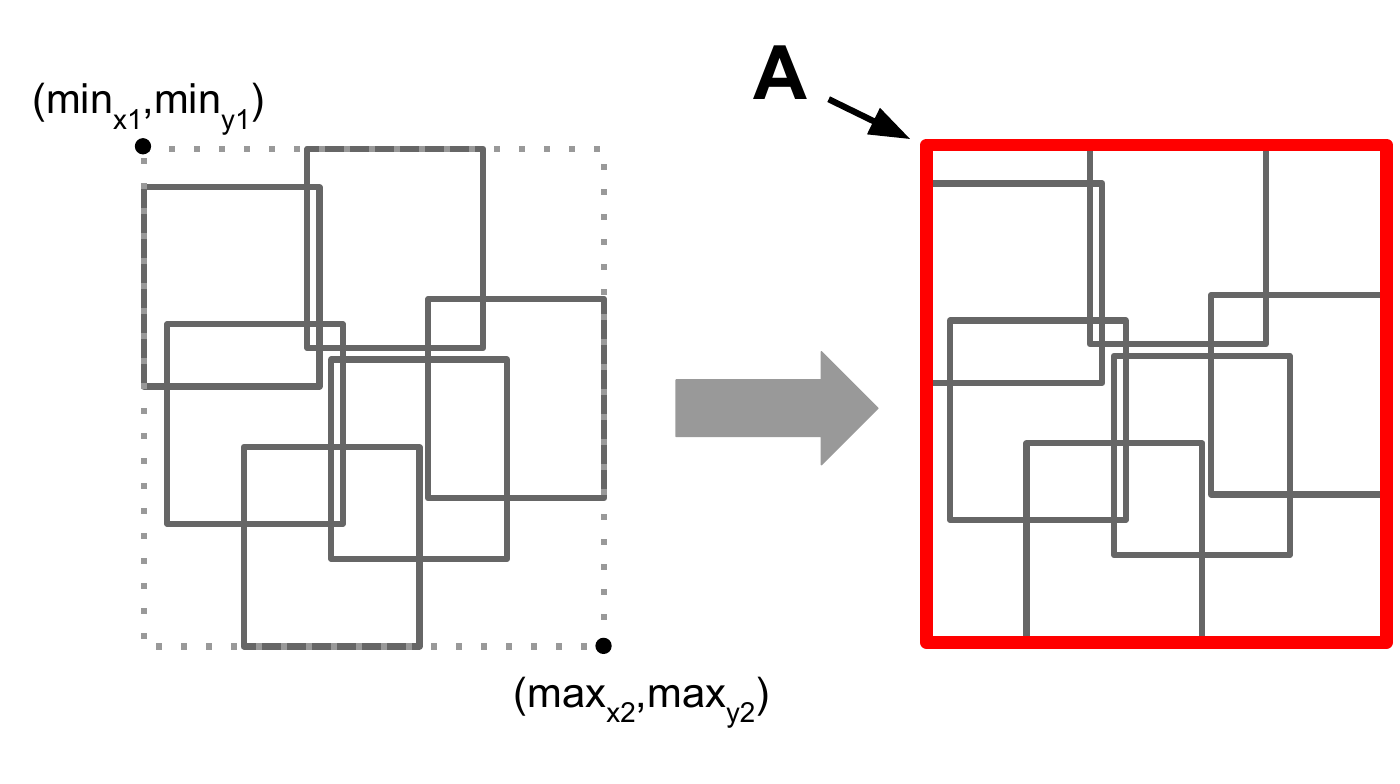

The accompanying images give an impression of how a run should look after executing the commands (left: GWR-based pointing, right: pointing with a pointing array).

The deictic gesture recognition is based entirely on NumPy, OpenCV and occasional SciPy functions. The full list of dependencies is given in environment.yml.
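As an illustration of how a step such as skin-color based hand detection can be done with NumPy alone, the sketch below thresholds a per-pixel skin probability looked up from a Cr/Cb table. The table layout (a 256 x 256 array indexed as `[Cr, Cb]`), the function name `skin_mask`, and the threshold value are assumptions made for this example. The actual format of the file passed via --skin-model may differ.

```python
import numpy as np


def skin_mask(ycrcb, skin_prob, threshold=0.5):
    """Return a boolean mask of likely skin pixels.

    ycrcb     -- uint8 image of shape (H, W, 3) with channels Y, Cr, Cb
                 (the channel order produced by OpenCV's YCrCb conversion)
    skin_prob -- assumed (256, 256) table of skin probabilities, indexed [Cr, Cb]
    threshold -- hypothetical cut-off above which a pixel counts as skin
    """
    cr = ycrcb[..., 1]
    cb = ycrcb[..., 2]
    # Integer array indexing looks up one probability per pixel.
    return skin_prob[cr, cb] > threshold
```

Ignoring the luminance channel Y is a common choice for histogram-based skin models because it makes the lookup less sensitive to brightness changes.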

For citing our paper please use the following BibTeX entry:

    @Article{Jirak2020,
      author={Jirak, Doreen
      and Biertimpel, David
      and Kerzel, Matthias
      and Wermter, Stefan},
      title={Solving visual object ambiguities when pointing: an unsupervised learning approach},
      journal={Neural Computing and Applications},
      year={2020},
      month={Jun},
      day={30},
      issn={1433-3058},
      doi={10.1007/s00521-020-05109-w},
      url={https://doi.org/10.1007/s00521-020-05109-w}
    }

Special thanks to German Parisi for all the fruitful discussions about the GWR and its possible applications for gesture recognition.

Note: The code this work is based on was developed during my bachelor thesis at the University of Hamburg (2018).