A Kotlin server application that analyzes GitHub repositories using AI models through the Model Context Protocol (MCP).

- Clone and analyze GitHub repositories

- Extract code structure and relationships

- Process code using Model Context Protocol

- Generate detailed insights and summaries

- Multiple server modes (stdio, SSE)

- JDK 23 or higher

- Kotlin 2.2.x

- Gradle 9.0 or higher

- Ollama with the `llama3.2` model (for the model API)

- MCP Inspector (for testing the MCP server)
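Ollama exposes an HTTP API, by default on port 11434, which matches the `MODEL_API_URL` default listed below. The following is a minimal sketch of a non-streaming call to Ollama's `/api/generate` endpoint using only the JDK's `java.net.http` client. The helper names `jsonEscape`, `generateRequest` and `generate` are hypothetical, and whether `ModelContextService` builds its requests this way is an assumption. The JSON escaping is deliberately minimal (backslashes, quotes and newlines only).

```kotlin
import java.net.URI
import java.net.http.HttpClient
import java.net.http.HttpRequest
import java.net.http.HttpResponse

// Hypothetical helper: escapes backslashes, quotes and newlines so text can be
// embedded in a JSON string. A real client should use a JSON library instead.
fun jsonEscape(s: String): String =
    s.replace("\\", "\\\\").replace("\"", "\\\"").replace("\n", "\\n")

// Hypothetical helper: builds a non-streaming POST to Ollama's /api/generate endpoint.
fun generateRequest(apiUrl: String, model: String, prompt: String): HttpRequest {
    val body = """{"model":"${jsonEscape(model)}","prompt":"${jsonEscape(prompt)}","stream":false}"""
    return HttpRequest.newBuilder(URI.create(apiUrl.trimEnd('/') + "/generate"))
        .header("Content-Type", "application/json")
        .POST(HttpRequest.BodyPublishers.ofString(body))
        .build()
}

// Sends the request and returns Ollama's raw JSON reply.
// Requires a running Ollama server, e.g. started with `ollama run llama3.2:latest`.
fun generate(apiUrl: String, model: String, prompt: String): String =
    HttpClient.newHttpClient()
        .send(generateRequest(apiUrl, model, prompt), HttpResponse.BodyHandlers.ofString())
        .body()
```

With `"stream": false`, Ollama answers with a single JSON object whose `response` field holds the generated text.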

- Clone this repository

- Build the project using Gradle:

./gradlew build- Start Ollama server:

ollama run llama3.2:latest- Start the MCP Inspector:

export DANGEROUSLY_OMIT_AUTH=true; npx @modelcontextprotocol/inspector@0.16.2- You can access the MCP Inspector at

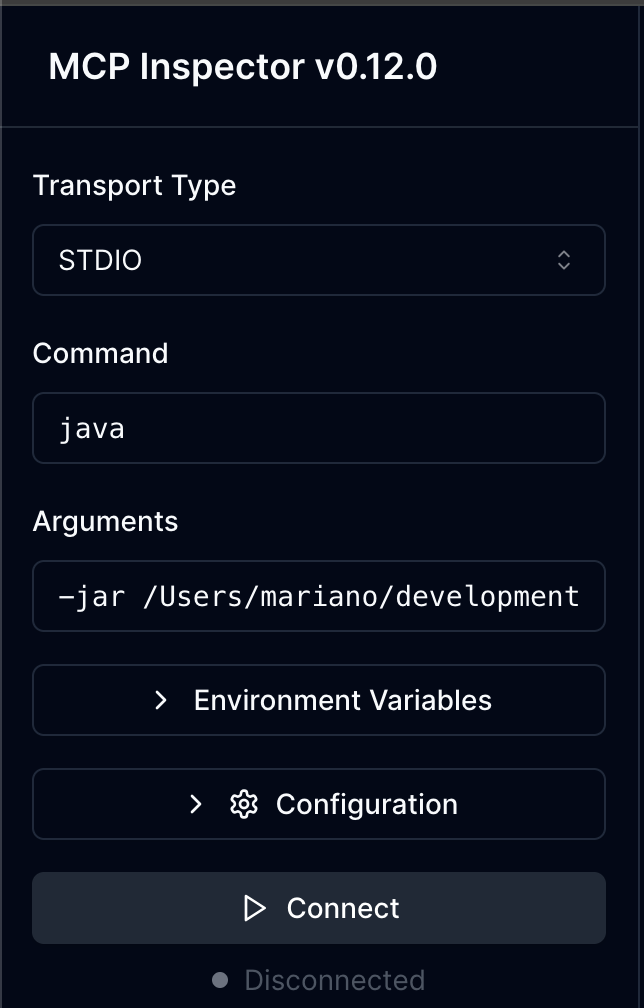

http://127.0.0.1:6274/and configure theArgumentsto start the server:

Use the following arguments:

-jar ~/mcp-github-code-analyzer/build/libs/mcp-github-code-analyzer-0.1.0-SNAPSHOT.jar --stdio-

- Click **Connect** to start the MCP server.

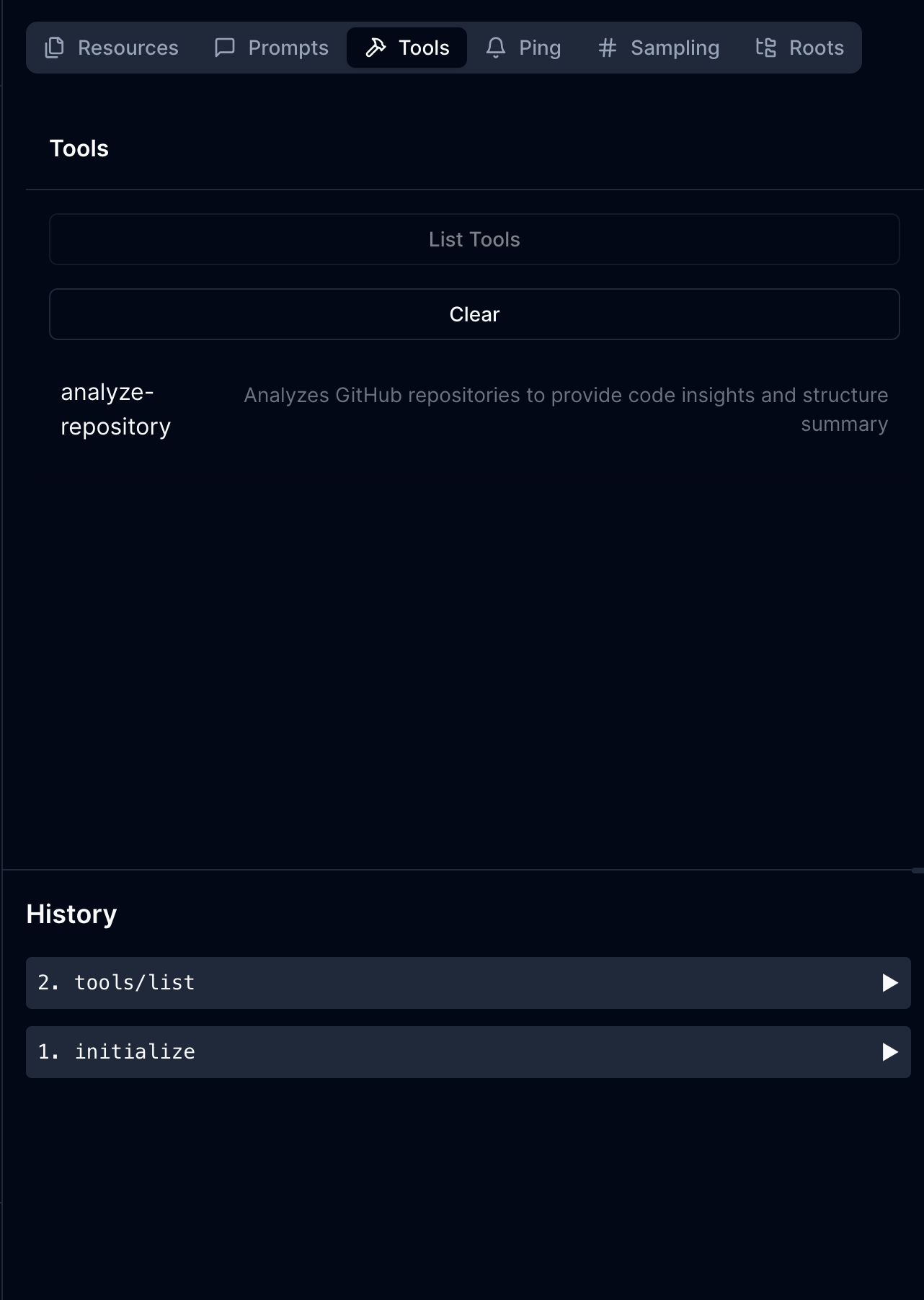

- Then click the **Tools** tab to discover the available tools. The `analyze-repository` tool should be listed and ready to use. Click the `analyze-repository` tool to see its details and parameters:

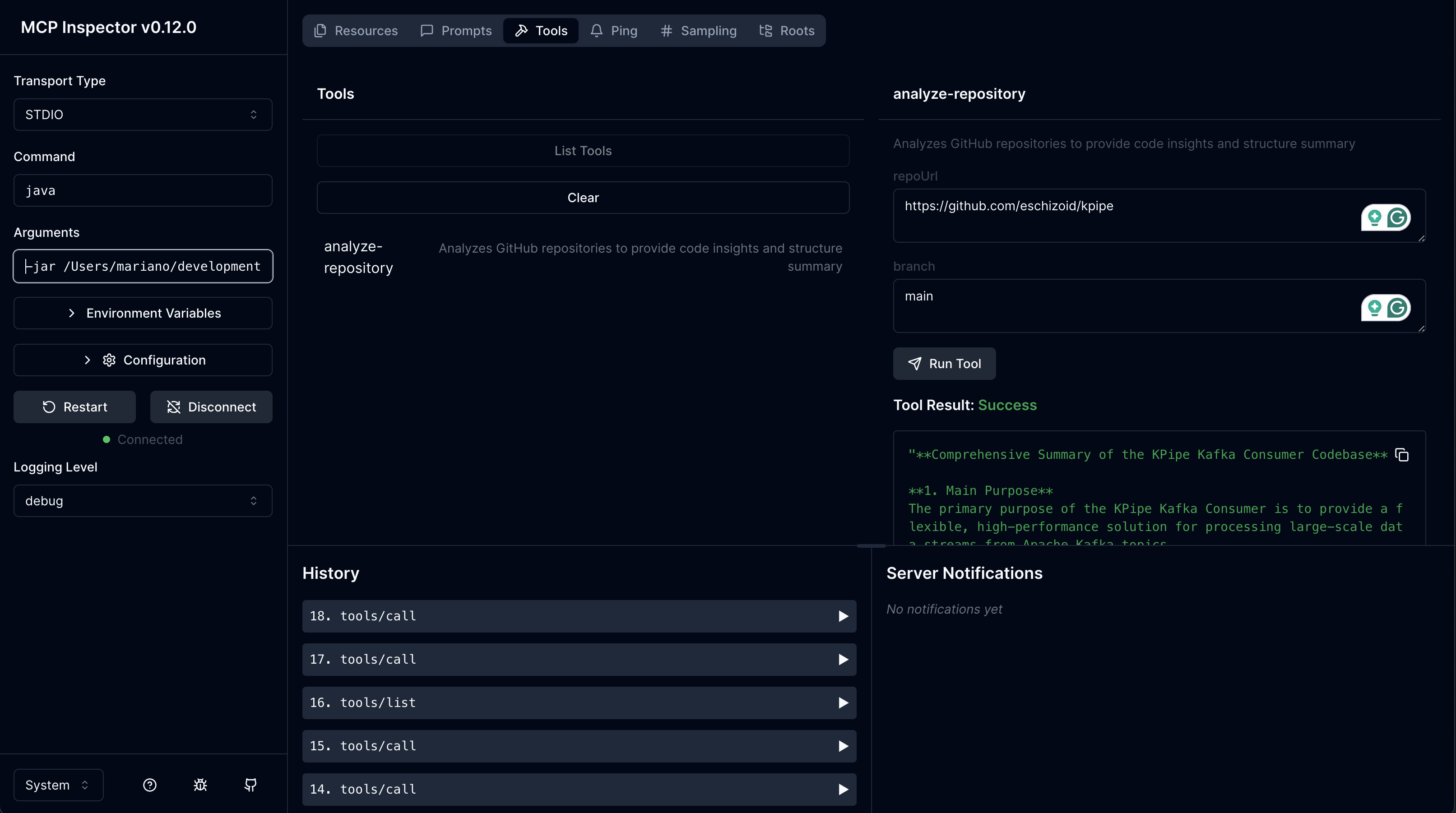

- Finally, enter the `repoUrl` and `branch` parameters and click **Run Tool** to start the analysis.
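Clicking **Run Tool** makes the Inspector send an MCP `tools/call` request, a JSON-RPC 2.0 message that names the tool and carries its arguments. The sketch below builds that message by hand to show its shape; the helper names `jsonString` and `toolsCallRequest` are hypothetical, and the repository URL in the usage is a placeholder. Only quotes and backslashes are escaped, so a real client should use a JSON library.

```kotlin
// Hypothetical helper: wraps a value in JSON quotes, escaping backslashes and quotes.
fun jsonString(s: String): String =
    "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\""

// Hypothetical helper: builds the JSON-RPC 2.0 "tools/call" request that an MCP client
// sends to invoke the analyze-repository tool.
fun toolsCallRequest(id: Int, repoUrl: String, branch: String = "main"): String {
    val arguments = "{\"repoUrl\":${jsonString(repoUrl)},\"branch\":${jsonString(branch)}}"
    val params = "{\"name\":\"analyze-repository\",\"arguments\":$arguments}"
    return "{\"jsonrpc\":\"2.0\",\"id\":$id,\"method\":\"tools/call\",\"params\":$params}"
}
```

For example, `toolsCallRequest(1, "https://github.com/owner/repo")` yields `{"jsonrpc":"2.0","id":1,"method":"tools/call","params":{"name":"analyze-repository","arguments":{"repoUrl":"https://github.com/owner/repo","branch":"main"}}}`.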

The application uses environment variables for configuration:

- `SERVER_PORT`: Port for the server (default: `3001`)

- `GITHUB_TOKEN`: GitHub token for API access (optional)

- `WORKING_DIRECTORY`: Directory for cloning repositories (default: system temp directory + `/mcp-code-analysis`)

- `MODEL_API_URL`: URL for the model API (default: `http://localhost:11434/api`)

- `MODEL_API_KEY`: API key for the model service (optional)

- `MODEL_NAME`: Name of the model to use (default: `llama3.2`)
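Reading these variables into an immutable data class could look like the sketch below. It is hypothetical: the field names and the `fromEnv` factory are assumptions, and the project's actual `AppConfig.kt` may differ. Taking the environment as a `Map` parameter (defaulting to `System.getenv()`) keeps the function testable. Falling back to the default when `SERVER_PORT` is not a valid integer is a design choice made here, not documented behavior.

```kotlin
import java.io.File

// Hypothetical sketch of an immutable configuration class; not the project's actual AppConfig.kt.
data class AppConfig(
    val serverPort: Int = 3001,
    val githubToken: String? = null,
    val workingDirectory: String = File(System.getProperty("java.io.tmpdir"), "mcp-code-analysis").path,
    val modelApiUrl: String = "http://localhost:11434/api",
    val modelApiKey: String? = null,
    val modelName: String = "llama3.2",
) {
    companion object {
        // Reads the documented variables; unset or blank values fall back to the defaults.
        fun fromEnv(env: Map<String, String> = System.getenv()): AppConfig {
            val defaults = AppConfig()
            fun value(key: String): String? = env[key]?.takeIf { it.isNotBlank() }
            return AppConfig(
                // Assumption: an unparsable port falls back to the default.
                serverPort = value("SERVER_PORT")?.toIntOrNull() ?: defaults.serverPort,
                githubToken = value("GITHUB_TOKEN"),
                workingDirectory = value("WORKING_DIRECTORY") ?: defaults.workingDirectory,
                modelApiUrl = value("MODEL_API_URL") ?: defaults.modelApiUrl,
                modelApiKey = value("MODEL_API_KEY"),
                modelName = value("MODEL_NAME") ?: defaults.modelName,
            )
        }
    }
}
```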

The server supports multiple modes:

- Default: run as an SSE server with the Ktor plugin on port 3001:

  `./gradlew run`

- Run with standard input/output:

  `./gradlew run --args="--stdio"`

- Run as an SSE server with the Ktor plugin on a custom port:

  `./gradlew run --args="--sse-server-ktor 3002"`

- Run as an SSE server with plain configuration:

  `./gradlew run --args="--sse-server 3002"`
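The mode flags above can be modeled as a small sealed hierarchy. This is a hypothetical sketch: the `RunMode` type and `parseRunMode` function are assumptions, and the project's `Main.kt` and `Mcp.kt` may parse arguments differently. Here a missing or invalid port falls back to `defaultPort`, and an unknown flag is rejected.

```kotlin
// Hypothetical model of the documented run modes; not the project's actual code.
sealed interface RunMode {
    data object Stdio : RunMode
    data class SseKtor(val port: Int) : RunMode
    data class SsePlain(val port: Int) : RunMode
}

fun parseRunMode(args: List<String>, defaultPort: Int = 3001): RunMode {
    // The optional second argument is the port; fall back to the default if absent or invalid.
    val port = args.getOrNull(1)?.toIntOrNull() ?: defaultPort
    return when (args.firstOrNull()) {
        null, "--sse-server-ktor" -> RunMode.SseKtor(port) // no arguments: Ktor SSE server
        "--stdio" -> RunMode.Stdio
        "--sse-server" -> RunMode.SsePlain(port)
        else -> throw IllegalArgumentException("Unknown mode: ${args.first()}")
    }
}
```

In a `main` function, one would call `parseRunMode(args.toList(), port)` with the port taken from `SERVER_PORT`, then start the matching transport.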

- With custom environment variables:

  `SERVER_PORT=3002 MODEL_NAME=mistral ./gradlew run`

This server implements the Model Context Protocol (MCP) and provides the following tool:

`analyze-repository`: Analyzes GitHub repositories to provide code insights and a structure summary.

Required parameters:

- `repoUrl`: GitHub repository URL (e.g., `https://github.com/owner/repo`)

Optional parameters:

- `branch`: Branch to analyze (default: `main`)
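A tool handler has to check these arguments before cloning anything. The sketch below is hypothetical: `AnalyzeRequest`, `parseAnalyzeArguments` and the URL pattern are assumptions, not the project's validation logic. It requires `repoUrl` to look like `https://github.com/owner/repo` (an optional `.git` suffix or trailing slash is tolerated) and defaults `branch` to `main` when it is missing or blank.

```kotlin
// Hypothetical validation of the analyze-repository arguments; not the project's actual code.
data class AnalyzeRequest(val owner: String, val repo: String, val branch: String)

// Matches https://github.com/<owner>/<repo>, optionally followed by ".git" and/or "/".
private val GITHUB_REPO_URL = Regex("""^https://github\.com/([\w.-]+)/([\w.-]+?)(?:\.git)?/?$""")

fun parseAnalyzeArguments(arguments: Map<String, String?>): AnalyzeRequest {
    val repoUrl = requireNotNull(arguments["repoUrl"]?.trim()?.takeIf { it.isNotEmpty() }) {
        "Missing required parameter: repoUrl"
    }
    val match = requireNotNull(GITHUB_REPO_URL.matchEntire(repoUrl)) {
        "Not a GitHub repository URL: $repoUrl"
    }
    val (owner, repo) = match.destructured
    // The optional branch parameter defaults to "main".
    val branch = arguments["branch"]?.trim()?.takeIf { it.isNotEmpty() } ?: "main"
    return AnalyzeRequest(owner, repo, branch)
}
```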

The source code is organized as follows:

- `Main.kt`: Application entry point
- `config/`: Configuration classes
  - `AppConfig.kt`: Immutable configuration data class
- `server/`: MCP server implementation
  - `Mcp.kt`: Functional MCP server with multiple run modes
- `processor/`: Code processing components
  - `CodeAnalyzer.kt`: Analyzes code structure
  - `CodeContentProcessor.kt`: Processes code files
- `service/`: Core services for repository analysis
  - `GitService.kt`: Handles repository cloning
  - `ModelContextService.kt`: Generates insights using AI models
  - `RepositoryAnalysisService.kt`: Coordinates the analysis process

All services are implemented as functional data classes with explicit dependency injection.
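One way to read "functional data classes with explicit dependency injection" is sketched below: each service is a data class whose behavior is supplied as function-typed properties, and the coordinating service receives its collaborators through its constructor. The class names mirror the files listed above, but every property and signature here is an assumption made for illustration.

```kotlin
// Hypothetical sketch; the real services' properties and signatures may differ.
data class GitService(val cloneRepository: (repoUrl: String, branch: String) -> String)

data class ModelContextService(val generateInsights: (prompt: String) -> String)

// Dependencies are passed in explicitly, so tests can substitute fakes.
data class RepositoryAnalysisService(
    val gitService: GitService,
    val modelContextService: ModelContextService,
    val readSources: (directory: String) -> List<String>, // hypothetical file reader
) {
    fun analyzeRepository(repoUrl: String, branch: String): String {
        val directory = gitService.cloneRepository(repoUrl, branch)
        val sources = readSources(directory)
        val prompt = "Summarize the structure of $repoUrl ($branch), ${sources.size} files:\n" +
            sources.joinToString("\n")
        return modelContextService.generateInsights(prompt)
    }
}
```

Because dependencies are plain constructor values, a test can wire the service with fakes, for example `GitService { _, _ -> "/tmp/clone" }`, without cloning a repository or contacting a model server.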

This project is open source and available under the MIT License.