" \

+```

+

+**MinIO Authentication**

+```

+--set minio.auth.rootUser=texera_minio \

+--set minio.auth.rootPassword=password \

+```

+

+**PostgreSQL Authentication (username is always postgres)**

+```

+--set postgresql.auth.postgresPassword=root_password \

+```

+

+> 💡 Note: If you change the PostgreSQL password, you must also update the password in the following connection string and add it to the install command:

+--set lakefs.secrets.databaseConnectionString="postgres://postgres:root_password@texera-postgresql:5432/texera_lakefs?sslmode=disable" \

+

+**LakeFS Authentication**

+```

+--set lakefs.auth.username=texera-admin \

+--set lakefs.auth.accessKey=AKIAIOSFOLKFSSAMPLES \

+--set lakefs.auth.secretKey=wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY \

+--set lakefs.secrets.authEncryptSecretKey=random_string_for_lakefs \

+```

+

+### Allocating Resources

+If your cluster has spare resources, you can allocate additional CPU, memory, and disk space to Texera to improve performance.

+

+**Postgres**

+

+To allocate more CPU, memory, and disk to Postgres, add:

+```

+--set postgresql.primary.resources.requests.cpu=4 \

+--set postgresql.primary.resources.requests.memory=4Gi \

+--set postgresql.primary.persistence.size=50Gi \

+```

+

+**MinIO**

+

+To increase the storage for users' input datasets, add:

+```

+--set minio.persistence.size=100Gi

+```

+

+**Computing Unit**

+

+To customize options for the computing unit, do:

+```bash

+# MAX_NUM_OF_RUNNING_COMPUTING_UNITS_PER_USER

+--set texeraEnvVars[5].value="2" \

+# CPU_OPTION_FOR_COMPUTING_UNIT

+--set texeraEnvVars[6].value="1,2,4" \

+# MEMORY_OPTION_FOR_COMPUTING_UNIT

+--set texeraEnvVars[7].value="2Gi,4Gi,16Gi" \

+# GPU_LIMIT_OPTIONS (allows 0 or 1 GPU to be allocated)

+--set texeraEnvVars[8].value="0,1" \

+```

+

+### Adjusting Number of Pods

+Scale out individual services for high availability or increased performance:

+

+```

+--set webserver.numOfPods=2 \

+--set workflowCompilingService.numOfPods=2 \

+--set pythonLanguageServer.replicaCount=2 \

+```

+

+

+### Retaining User Data

+By default, all user data stored by Texera will be deleted when the cluster deployment is removed. Since user data is valuable, you can preserve all datasets and files even after uninstalling the cluster by setting:

+```

+--set persistence.removeAfterUninstall=false

+```

diff --git a/texera.wiki/Deploying-Texera-on-Google-Cloud-Platform-(GCP).md b/texera.wiki/Deploying-Texera-on-Google-Cloud-Platform-(GCP).md

new file mode 100644

index 00000000000..43c1cd8bb70

--- /dev/null

+++ b/texera.wiki/Deploying-Texera-on-Google-Cloud-Platform-(GCP).md

@@ -0,0 +1,229 @@

+## Prerequisites: Check your quota

+

+Your GCP account should be able to allocate at least 20 vCPUs and 1 TB of SSD. To check your quota, go to the [GCP Quotas](https://console.cloud.google.com/iam-admin/quotas?referrer=search&pageState=(%22allQuotasTable%22:(%22f%22:%22%255B%257B_22k_22_3A_22Name_22_2C_22t_22_3A10_2C_22v_22_3A_22_5C_22CPUs_5C_22_22_2C_22s_22_3Atrue_2C_22i_22_3A_22displayName_22%257D_2C%257B_22k_22_3A_22_22_2C_22t_22_3A10_2C_22v_22_3A_22_5C_22OR_5C_22_22_2C_22o_22_3Atrue_2C_22s_22_3Atrue%257D_2C%257B_22k_22_3A_22Name_22_2C_22t_22_3A10_2C_22v_22_3A_22_5C_22Persistent%2520Disk%2520SSD%2520%2528GB%2529_5C_22_22_2C_22s_22_3Atrue_2C_22i_22_3A_22displayName_22%257D_2C%257B_22k_22_3A_22Dimensions%2520%2528e.g.%2520location%2529_22_2C_22t_22_3A10_2C_22v_22_3A_22_5C_22region_3Aus-central1_5C_22_22_2C_22s_22_3Atrue_2C_22i_22_3A_22displayDimensions_22%257D%255D%22))) page.

+You should be able to see a pre-populated query for listing the CPUs and SSDs in the `us-central1` region by default. If you plan to deploy Texera in another region, you need to change the `Dimensions` part of the query.

+

+If your quota does not have at least 20 CPUs and 1 TB SSD, you need to request a quota increase by clicking the 3-dot button on the right -> "Edit Quota".

+

+---

+## 1. Create an Autopilot GKE cluster

+

+> 💡 Note: If you already have a GKE cluster and wish to use it for deploying Texera, you can skip this step and proceed directly to Step 2.

+

+Navigate to GCP console -> Kubernetes Engine -> [Clusters](https://console.cloud.google.com/kubernetes/list/overview). Click on the `create` button.

+

+> 💡 Note: You may need to enable the Kubernetes API if you haven't done so.

+Use all default values to create a cluster. You can also customize the cluster if needed.

+After 15-20 minutes, the status of your cluster should show a green checkmark, with 0 vCPUs and 0 memory usage.

+

+Click the three dots on the right, and choose "connect".

+

+---

+

+In the pop-up window, copy the **project** and **region** to your clipboard. Then click **"Run in Cloud Shell"**.

+Press Enter for the first command shown on the terminal.

+

+## 2. Reserve Two Static IPs (for Texera website and MinIO)

+

+After accessing your cluster using Cloud Shell, set the following variables to the region and project you copied in Step 1.

+```bash

+REGION=""

+PROJECT=""

+```
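
Both variables must be non-empty before the `gcloud` commands in this step can work. A minimal sketch of a guard, assuming a POSIX-compatible shell such as the one in Cloud Shell; `require_var` is a hypothetical helper name, not part of gcloud or Texera:

```shell
# Hypothetical helper: fail early when a required variable is unset or empty.
require_var() {
  # Look up the value of the variable whose name is passed as $1.
  eval "_value=\${$1:-}"
  if [ -z "$_value" ]; then
    echo "ERROR: $1 is not set" >&2
    return 1
  fi
}
```

Calling `require_var REGION && require_var PROJECT` before the reservation commands stops early with a readable message instead of a less obvious `gcloud` error.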

+

+Execute the following bash commands to reserve two public IP addresses.

+

+```bash

+gcloud compute addresses create texera-ip --region=$REGION --project=$PROJECT

+TEXERA_IP=$(gcloud compute addresses describe texera-ip --region=$REGION --format="get(address)" --project $PROJECT)

+gcloud compute addresses create minio-ip --region=$REGION --project=$PROJECT

+MINIO_IP=$(gcloud compute addresses describe minio-ip --region=$REGION --format="get(address)" --project $PROJECT)

+```

+

+Execute the following bash commands to create two NGINX ingress controllers with Helm.

+```bash

+helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx

+helm repo update

+helm install nginx-texera ingress-nginx/ingress-nginx \

+ --namespace texera --create-namespace \

+ --set controller.ingressClassResource.name=nginx \

+ --set controller.ingressClassResource.controllerValue="k8s.io/ingress-nginx" \

+ --set controller.ingressClass=nginx \

+ --set controller.service.loadBalancerIP=$TEXERA_IP \

+ --set controller.service.annotations."cloud\.google\.com/load-balancer-type"="External" \

+ --set rbac.create=true

+

+helm install nginx-minio ingress-nginx/ingress-nginx \

+ --namespace texera \

+ --set controller.ingressClassResource.name=nginx-minio \

+ --set controller.ingressClassResource.controllerValue="k8s.io/nginx-minio" \

+ --set controller.ingressClass=nginx-minio \

+ --set controller.service.loadBalancerIP=$MINIO_IP \

+ --set controller.service.annotations."cloud\.google\.com/load-balancer-type"="External" \

+ --set rbac.create=true

+```

+

+---

+

+## 3. Prepare Texera Installation

+

+Execute the following bash commands.

+

+```bash

+curl -L -o texera.zip https://github.com/Texera/texera/releases/download/1.1.0/texera-cluster-1-1-0-release.zip

+unzip texera.zip -d texera-cluster

+rm texera.zip

+helm dependency build texera-cluster

+```

+

+---

+

+## 4. Deploy Texera

+

+Execute the following bash command.

+

+```bash

+helm install texera texera-cluster --namespace texera --create-namespace \

+ --set postgresql.primary.persistence.storageClass=standard-rwo \

+ --set ingress-nginx.enabled=false \

+ --set metrics-server.enabled=false \

+ --set exampleDataLoader.enabled=false \

+ --set minio.customIngress.enabled=true \

+ --set minio.customIngress.ingressClassName=nginx-minio \

+ --set minio.customIngress.texeraHostname="http://$TEXERA_IP" \

+ --set minio.persistence.storageClass=standard-rwo \

+ --set-string lakefs.lakefsConfig="$(cat <`.

+

+

+**To remove the Texera deployment from your Kubernetes cluster, execute the following bash commands.**

+

+```

+helm uninstall texera -n texera

+helm uninstall nginx-texera -n texera

+helm uninstall nginx-minio -n texera

+```

+> Note: You also need to release the two reserved IP addresses on [GCP](https://console.cloud.google.com/networking/addresses/list).
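
The addresses can also be released from Cloud Shell rather than the console. The sketch below only prints the `gcloud compute addresses delete` commands so they can be reviewed before running; it assumes the names `texera-ip` and `minio-ip` from Step 2, and `print_release_commands` and the fallback values `us-central1` and `my-project` are placeholders for illustration:

```shell
# Print (not run) the commands that release the two addresses reserved in Step 2.
print_release_commands() {
  region="$1"
  project="$2"
  for name in texera-ip minio-ip; do
    echo "gcloud compute addresses delete $name --region=$region --project=$project"
  done
}

# Falls back to placeholder values when REGION or PROJECT is not set.
print_release_commands "${REGION:-us-central1}" "${PROJECT:-my-project}"
```

Printing first is a deliberate choice here, since deleting an address cannot be undone and the same IP is not guaranteed to be available again.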

+

+---

+

+## Advanced Configuration

+You can customize the deployment by adding the following `--set` flags to your `helm install` command. These flags allow you to configure authentication, resource limits, and the number of pods for the Texera deployment.

+

+### Texera Credentials

+Texera relies on Postgres, MinIO, and LakeFS, all of which require credentials. You can change the default values to make your deployment more secure.

+

+**Default Texera Admin User**

+

+Texera ships with a built-in administrator account (username: texera, password: texera).

+To supply your own credentials during installation, pass the following Helm overrides:

+

+```bash

+# USER_SYS_ADMIN_USERNAME

+--set texeraEnvVars[0].value="" \

+# USER_SYS_ADMIN_PASSWORD

+--set texeraEnvVars[1].value="" \

+```

+

+**MinIO Authentication**

+```

+--set minio.auth.rootUser=texera_minio \

+--set minio.auth.rootPassword=password \

+```

+

+**PostgreSQL Authentication (username is always postgres)**

+```

+--set postgresql.auth.postgresPassword=root_password \

+```

+

+> 💡 Note: If you change the PostgreSQL password, you must also update the password in the following connection string and add it to the install command:

+--set lakefs.secrets.databaseConnectionString="postgres://postgres:root_password@texera-postgresql:5432/texera_lakefs?sslmode=disable" \
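
Because the password appears in two flags, it is easy to change one and forget the other. A minimal sketch that derives both from a single variable; `PG_PASSWORD` and `LAKEFS_DB_URL` are names introduced here for illustration, and the connection string format is copied from the note above:

```shell
# Placeholder password for illustration only; choose your own.
PG_PASSWORD="root_password"

# Connection string from the note above, with the password substituted in.
LAKEFS_DB_URL="postgres://postgres:${PG_PASSWORD}@texera-postgresql:5432/texera_lakefs?sslmode=disable"

# Print the two flags so they can be added to the helm install command.
printf '%s\n' \
  "--set postgresql.auth.postgresPassword=${PG_PASSWORD}" \
  "--set lakefs.secrets.databaseConnectionString=${LAKEFS_DB_URL}"
```

A password containing characters that are reserved in URLs (such as `@`, `:`, or `/`) would need to be percent-encoded inside the connection string, so a password without such characters keeps this simple.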

+

+**LakeFS Authentication**

+```

+--set lakefs.auth.username=texera-admin \

+--set lakefs.auth.accessKey=AKIAIOSFOLKFSSAMPLES \

+--set lakefs.auth.secretKey=wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY \

+--set lakefs.secrets.authEncryptSecretKey=random_string_for_lakefs \

+```

+

+### Allocating Resources

+If your cluster has spare resources, you can allocate additional CPU, memory, and disk space to Texera to improve performance.

+

+**Postgres**

+

+To allocate more CPU, memory, and disk to Postgres, add:

+```

+--set postgresql.primary.resources.requests.cpu=4 \

+--set postgresql.primary.resources.requests.memory=4Gi \

+--set postgresql.primary.persistence.size=50Gi \

+```

+

+**MinIO**

+

+To increase the storage for users' input datasets, add:

+```

+--set minio.persistence.size=100Gi

+```

+

+**Computing Unit**

+

+To customize options for the computing unit, do:

+```bash

+# MAX_NUM_OF_RUNNING_COMPUTING_UNITS_PER_USER

+--set texeraEnvVars[5].value="2" \

+# CPU_OPTION_FOR_COMPUTING_UNIT

+--set texeraEnvVars[6].value="1,2,4" \

+# MEMORY_OPTION_FOR_COMPUTING_UNIT

+--set texeraEnvVars[7].value="2Gi,4Gi,16Gi" \

+# GPU_LIMIT_OPTIONS (allows 0 or 1 GPU to be allocated)

+--set texeraEnvVars[8].value="0,1" \

+```
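
The fragments above interleave `#` comment lines with backslash-continued flags. Pasted verbatim into a single command, a comment line ends the command early, so the flags after it are not passed to Helm. One way around this, sketched here under the assumption of a POSIX-compatible shell, is to collect the flags in the positional parameters with `set --` and pass them all at once. The flag values are the examples from this page:

```shell
# Start with an empty argument list, then append one flag per step.
set --

# MAX_NUM_OF_RUNNING_COMPUTING_UNITS_PER_USER
# (single quotes keep the shell from treating [5] as a glob pattern)
set -- "$@" --set 'texeraEnvVars[5].value=2'

# Postgres resources
set -- "$@" --set 'postgresql.primary.resources.requests.cpu=4'
set -- "$@" --set 'postgresql.primary.resources.requests.memory=4Gi'

# Show the assembled flags. The final install command would then be:
#   helm install texera texera-cluster --namespace texera "$@"
printf '%s\n' "$@"
```

Comments can sit freely between the `set --` lines because each line is a complete command on its own.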

+

+### Adjusting Number of Pods

+Scale out individual services for high availability or increased performance:

+

+```

+--set webserver.numOfPods=2 \

+--set workflowCompilingService.numOfPods=2 \

+--set pythonLanguageServer.replicaCount=2 \

+```

+

+

+### Retaining User Data

+By default, all user data stored by Texera will be deleted when the cluster deployment is removed. Since user data is valuable, you can preserve all datasets and files even after uninstalling the cluster by setting:

+```

+--set persistence.removeAfterUninstall=false

+```

\ No newline at end of file

diff --git a/texera.wiki/Guide-for-Developers.md b/texera.wiki/Guide-for-Developers.md

new file mode 100644

index 00000000000..50e68c55741

--- /dev/null

+++ b/texera.wiki/Guide-for-Developers.md

@@ -0,0 +1,352 @@

+## 0. Requirements

+

+#### **Java 11 JDK**

+

+Install the Java 11 JDK (Java Development Kit); we recommend the [Adoptium](https://adoptium.net/installation/) distribution (formerly AdoptOpenJDK). To verify the installation, run:

+```console

+java -version

+```

+

+Next, set `JAVA_HOME`. On macOS you can run:

+```

+export JAVA_HOME=$(/usr/libexec/java_home -v 11)

+```

+On Windows, add a system environment variable called `JAVA_HOME` that points to the JDK directory.

+

+#### **Python 3.12, 3.11, or 3.10**

+

+Install Python 3.12 (or 3.11/3.10) from the official site or your preferred package manager.

+

+#### **Git**

+

+On Windows, install the software from https://gitforwindows.org/. `Git Bash` is available after installing `Git`.

+

+On Mac and Linux, see https://git-scm.com/book/en/v2/Getting-Started-Installing-Git

+

+Verify the installation by:

+```console

+git --version

+```

+

+#### **sbt (Scala Build Tool)**

+

+Install `sbt` for building the project. Please refer to [sbt Reference Manual: Installing sbt](https://www.scala-sbt.org/1.x/docs/Setup.html). We recommend using [sdkman](https://sdkman.io/install) to install sbt.

+

+Verify the installation by:

+```console

+sbt --version

+```

+

+If the above command fails on Windows after installation, it is recommended to restart your computer.

+

+#### **node LTS Version > 18.x**

+

+Install an LTS version (not the latest) of `node`. Currently, we require LTS version > 18.x.

+

+On Windows, install from [https://nodejs.org/en/](https://nodejs.org/en/).

+

+On Mac and Linux, [use NVM to install NodeJS](https://www.linode.com/docs/guides/how-to-install-use-node-version-manager-nvm/) as it avoids permission issues.

+

+Verify the installation by:

+```console

+node -v

+```
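
The version requirement can also be checked in a script. The sketch below is illustrative only: `node_major` is a hypothetical helper, and the comparison reads "> 18.x" as "major version greater than 18", which is an assumption; adjust the threshold if version 18 itself is acceptable for your setup.

```shell
# Hypothetical helper: extract the major version from `node -v` output such as "v20.11.1".
node_major() {
  version="${1#v}"        # drop the leading "v" if present
  echo "${version%%.*}"   # keep everything before the first dot
}

# Example with a literal version string; in practice pass "$(node -v)".
if [ "$(node_major v20.11.1)" -gt 18 ]; then
  echo "node version OK"
else
  echo "node version too old"
fi
```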

+

+#### **Angular 16 Cli**

+

+Install the Angular 16 CLI globally:

+```console

+npm install -g @angular/cli@16

+```

+

+Verify the installation by:

+```console

+ng version

+```

+

+

+

+

+## 1. Setup Backend Development.

+

+

+

+### Clone and Configure Texera

+

+In the terminal, clone the Texera repo:

+```console

+git clone git@github.com:Texera/texera.git

+```

+

+Make the following changes to the configuration files:

+- Edit `common/config/src/main/resources/storage.conf` to use your Postgres credentials.

+```diff

+ jdbc {

+

+- username = "postgres"

++ username =

+ username = ${?STORAGE_JDBC_USERNAME}

+

+- password = "postgres"

++ password =

+ password = ${?STORAGE_JDBC_PASSWORD}

+ }

+```

+

+- Edit `common/config/src/main/resources/udf.conf` to use the correct Python executable path (you can find it with `which python` on macOS/Linux or `where python` on Windows):

+```diff

+python {

+- path =

++ path = "/the/executable/path/of/python"

+}

+```
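
In `storage.conf`, lines of the form `username = ${?STORAGE_JDBC_USERNAME}` are HOCON optional substitutions: when the environment variable is defined, its value replaces the one set on the line above it. Assuming the services are started from an environment where these variables are visible (for an IntelliJ run configuration they would have to be added there), credentials could be supplied without editing the file. The values below are placeholders:

```shell
# Placeholder credentials for illustration; replace with your own Postgres credentials.
export STORAGE_JDBC_USERNAME="texera_dev"
export STORAGE_JDBC_PASSWORD="change-me"

# Confirm the variables are visible to child processes started from this shell.
env | grep '^STORAGE_JDBC_'
```

This keeps local credentials out of the working tree, at the cost of having to set the variables in every place the services are launched from.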

+

+### Setup PostgreSQL locally

+

+Texera uses [PostgreSQL](https://www.postgresql.org/) to manage the user data and system metadata. To install and configure it:

+Install [Postgres](https://www.postgresql.org/download/). If you are using Mac, simply execute:

+```console

+brew install postgresql

+```

+

+Install [Pgroonga](https://pgroonga.github.io/install/) to enable full-text search. If you are using Mac, simply execute:

+```console

+brew install pgroonga

+```

+

+Execute `sql/texera_ddl.sql` to create the `texera_db` database, which stores user data and system metadata:

+```console

+psql -U postgres -f "sql/texera_ddl.sql"

+```

+Execute `sql/iceberg_postgres_catalog.sql` to create the database for storing Iceberg catalogs.

+```console

+psql -U postgres -f "sql/iceberg_postgres_catalog.sql"

+```

+

+### Setup the LakeFS+Minio locally

+

+Texera requires [LakeFS](https://lakefs.io/) and an S3-compatible object store ([Minio](https://min.io/docs/minio/kubernetes/upstream/index.html) is one such implementation) for dataset storage. Both storage services must be set up locally for Texera's dataset feature to work.

+

+Install [Docker Desktop](https://docs.docker.com/desktop/setup/install/mac-install/), which contains both Docker Engine and Docker Compose. Make sure you launch Docker after installing it.

+

+In the terminal, enter the directory containing the docker-compose file:

+```

+cd file-service/src/main/resources

+```

+

+Edit `docker-compose.yml`: search for `volumes` in the file and follow the instructions in the comment. This step is required; otherwise your data will be lost if the containers are deleted.

+

+Execute the following command to start LakeFS and Minio:

+```

+docker compose up

+```

+

+### Import the project into IntelliJ

+

+

+Before you import the project, make sure the "Scala" and "SBT Executor" plugins are installed in IntelliJ.

+

+1. In IntelliJ, open `File -> New -> Project From Existing Source`, then choose the `texera` folder.

+2. In the next window, select `Import Project from external model`, then select `sbt`.

+3. In the next window, make sure `Project JDK` is set. Click OK.

+4. IntelliJ should import and build this Scala project. In the terminal under `texera`, run:

+```

+sbt clean protocGenerate

+```

+This generates the code specified by the proto files, after which IntelliJ indexing should start. Wait until indexing and importing are complete. You can then open the sbt tab on the right and check the loaded `texera` project and its sub-projects:

+

+5. When IntelliJ prompts "Scalafmt configuration detected in this project" in the bottom right corner, select "Enable".

+If you missed the IntelliJ prompt, you can check the `Event Log` on the bottom right.

+

+6. In addition to the microservices, you need to run the `JooqCodeGenerator` located at `common/dao/src/main/scala/org/apache/texera/dao/JooqCodeGenerator.scala` before starting the microservices for the first time, and again each time you make changes to the database.

+

+### Run the backend micro services in IntelliJ

+The easiest way to run the backend services is in IntelliJ.

+Texera currently consists of several microservices with different purposes. If a microservice fails right after starting, it may depend on another microservice that is not up yet, so wait for the others to start and try again. Also make sure the LakeFS docker compose is running:

+

+| **Component** | **File Path** | **Purpose / Functionality** |
+|---|---|---|
+| **ConfigService** | `config-service/src/main/scala/org/apache/texera/service/ConfigService.scala` | Hosts the system configurations to allow the frontend to retrieve configuration data. |
+| **TexeraWebApplication** | `amber/src/main/scala/org/apache/texera/web/TexeraWebApplication.scala` | Provides user login, community resource read/write operations, and loads metadata for available operators. |
+| **FileService** | `file-service/src/main/scala/org/apache/texera/service/FileService.scala` | Provides dataset-related endpoints including dataset management, access control, and read/write operations across datasets. |
+| **WorkflowCompilingService** | `workflow-compiling-service/src/main/scala/org/apache/texera/service/WorkflowCompilingService.scala` | Propagates schema and checks for static errors during workflow construction. |
+| **ComputingUnitMaster** | `amber/src/main/scala/org/apache/texera/web/ComputingUnitMaster.scala` | Manages workflow execution and acts as the master node of the computing cluster. **Must start before `ComputingUnitWorker`.** |
+| **ComputingUnitWorker** | `amber/src/main/scala/org/apache/texera/web/ComputingUnitWorker.scala` | A worker node in the computing cluster (not a web server). |
+| **ComputingUnitManagingService** | `computing-unit-managing-service/src/main/scala/org/apache/texera/service/ComputingUnitManagingService.scala` | Manages the lifecycle of different types of computing units and their connections to users' frontends. |
+| **AccessControlService** | `access-control-service/src/main/scala/org/apache/texera/service/AccessControlService.scala` | Authorizes requests sent to computing units. It does not need to run for local development; it is only used in the Kubernetes setup. |

+

+

+

+To run any of the above services, open the corresponding Scala file (e.g., for `TexeraWebApplication`, open `TexeraWebApplication.scala`), then run its main function by clicking the green run button and wait for the process to start up.

+

+For `TexeraWebApplication`, the following message indicates that it is successfully running:

+```

+[main] [akka.remote.Remoting] Remoting now listens on addresses:

+org.eclipse.jetty.server.Server: Started

+```

+* If IntelliJ displays `CreateProcess error=206, The filename or extension is too long`, [add `-Didea.dynamic.classpath=true` under Help | Edit Custom VM Options and restart the IDE](https://youtrack.jetbrains.com/issue/IDEA-285090).

+

+

+For `ComputingUnitMaster`, the following prompt indicates that it is successfully running:

+

+```

+---------Now we have 1 node in the cluster---------

+```

+

+### Enable Python-based Operators

+

+Texera has many Python-based operators, such as visualization and UDF operators. To enable them, make sure R is installed on your system, then install the Python dependencies by executing:

+```console

+cd texera

+pip install -r amber/requirements.txt -r amber/operator-requirements.txt

+```

+

+

+

+

+

+

+

+

+## 2. Launch Frontend

+

+This is for developers that work on the frontend part of the project. This step is NOT needed if you develop the backend only.

+

+Before you start, make sure the backend services are all running.

+

+### Install Angular CLI

+```console

+cd frontend

+yarn install

+```

+

+You can ignore any warnings (they are usually shown in yellow or start with `WARN`).

+

+### Launch Frontend in IntelliJ for local development

+

+1. Click on the Green Run button next to the `start` in `frontend/package.json`.

+2. Wait for the server to start, then open a browser and access `http://localhost:4200`. You should see the Texera UI with a canvas.

+

+

+Every time you save the changes to the frontend code, the browser will automatically refresh to show the latest UI.

+You can also run frontend using command line:

+```console

+yarn start

+```

+

+### Launch Frontend in the production environment

+

+Run the following command:

+```

+yarn run build

+```

+This command will optimize the frontend code to make it run faster. This step will take a while. After that, start the backend engine in IntelliJ and use your browser to access `http://localhost:8080`.

+

+

+

+

+

+

+

+

+## 3. Email Notification (Optional)

+

+

+1. Set `smtp` in `config/src/main/resources/user-system.conf`. You need an App password if the account has 2FA.

+2. Log in to Texera with an admin account.

+3. Open the Gmail dashboard under the admin tab.

+4. Send a test email.

+

+

+

+

+

+

+## 4. Misc

+

+

+

+This part is optional; you only need to do this if you are working on a specific task.

+

+### To create a new database table and write queries using Java through Jooq

+1. Create the new table in PostgreSQL and update `sql/texera_ddl.sql` to include it.

+2. Run `common/dao/src/main/scala/org/apache/texera/dao/JooqCodeGenerator.scala` to generate the classes for the new table.

+

+Note: Jooq generates DAOs for simple operations. If the required SQL query is complex, the developer can use the generated Table classes to implement the operation.

+

+### Disable password login

+Edit `config/src/main/resources/gui.conf`, change `local-login` to `false`.

+

+### Enforce invite only

+Edit `config/src/main/resources/user-system.conf`, change `invite-only` to `true`.

+

+### Backend endpoints Role Annotation

+There are two types of permissions for the backend endpoints:

+1. @RolesAllowed(Array("Role"))

+2. @PermitAll

+Please don't leave the permission setting blank. If the permission is missing for an endpoint, it will be @PermitAll by default.

+

+### **Windows: enable long paths**

+

+Some workflows create deep directories (e.g., when writing `metadata.json` via Python/Iceberg). On Windows, this can exceed the legacy `MAX_PATH` limit (~260 characters) and cause failures like:

+

+```

+[WinError 3] The system cannot find the path specified.

+```

+

+Enable long paths support (per machine) by running PowerShell **as Administrator**:

+

+```powershell

+New-ItemProperty -Path "HKLM:\SYSTEM\CurrentControlSet\Control\FileSystem" -Name "LongPathsEnabled" -Value 1 -PropertyType DWORD -Force

+```

+

+Verify the setting (expected value: `1`):

+

+```powershell

+Get-ItemProperty -Path "HKLM:\SYSTEM\CurrentControlSet\Control\FileSystem" -Name "LongPathsEnabled"

+```

+

+> If you cannot change this policy (e.g., on managed devices), keep your workspace path short (e.g., `C:\src\texera`) to reduce overall path length.

+

+### **Windows: Fix `HADOOP_HOME` errors**

+

+On Windows, if you encounter the following error when executing a workflow:

+

+```

+Caused by: java.io.FileNotFoundException: HADOOP_HOME and hadoop.home.dir are unset

+```

+

+follow these steps to resolve it:

+

+**Steps**

+

+1. Obtain a `winutils.exe` matching your Hadoop line (Texera currently uses Hadoop 3.3.x).

+ - Suggested source (use any equivalent source approved for your environment):

+ https://github.com/cdarlint/winutils/tree/master/hadoop-3.3.5/bin

+2. Create the directory and place the binary:

+ ```

+ C:\hadoop\bin\winutils.exe

+ ```

+3. In IntelliJ, add this **VM option** to the **FileService** run configuration:

+ ```

+ -Dhadoop.home.dir="C:\hadoop"

+ ```

+4. (Optional) Also set a system environment variable and restart the IDE/terminal:

+ ```

+ HADOOP_HOME=C:\hadoop

+ ```

+

+**Notes**

+

+- This issue may happen only on **Windows**; macOS/Linux do not need `winutils.exe`.

+- Ensure the `winutils.exe` you use matches your Hadoop major/minor (e.g., 3.3.x).

+- After configuring, the prior read/write and “unset” errors should disappear.
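
The layout described in the steps above can be checked with a short script. This is only a sketch under the assumption that `winutils.exe` lives in `<HADOOP_HOME>\bin`, as in step 2; the helper name `check_hadoop_home` is hypothetical and not part of Texera or Hadoop.

```python
import os
from typing import Optional


def check_hadoop_home(hadoop_home: Optional[str]) -> str:
    """Report whether `hadoop_home` points at a directory containing bin/winutils.exe."""
    if not hadoop_home:
        return "HADOOP_HOME is unset"
    winutils = os.path.join(hadoop_home, "bin", "winutils.exe")
    if not os.path.isfile(winutils):
        return f"missing {winutils}"
    return "ok"


if __name__ == "__main__":
    # Check the environment variable set in the optional step 4.
    print(check_hadoop_home(os.environ.get("HADOOP_HOME")))
```

Note that the script only inspects the environment variable; it cannot see a `-Dhadoop.home.dir` VM option set in an IntelliJ run configuration.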

+

+

+

\ No newline at end of file

diff --git a/texera.wiki/Guide-for-how-to-use-Texera.md b/texera.wiki/Guide-for-how-to-use-Texera.md

new file mode 100644

index 00000000000..80808f7ad5f

--- /dev/null

+++ b/texera.wiki/Guide-for-how-to-use-Texera.md

@@ -0,0 +1,41 @@

+Texera is an open-source system that supports collaborative data science at scale using Web-based workflows. This page includes instructions on how to install the system as a developer and do a simple workflow.

+

+## Prerequisites

+We assume you have gone through either the [Single Node Instruction](https://github.com/Texera/texera/wiki/Installing-Texera-on-a-Single-Node) or the [Guide for Texera Developers](https://github.com/Texera/texera/wiki/Guide-for-Developers), and that Texera is up and running on your laptop.

+

+## Access Texera through Browser

+Enter Texera's URL in your browser to access Texera.

+

+An admin account with username `texera` and password `texera` is pre-created by default. Enter the username and password, then click the `Sign in` button to log in as the admin:

+

+

+### User Dashboard UI Overview

+Once logged in, you should see the below page:

+

+

+This is Texera's dashboard page. On the left navigation bar, you can switch between different resource modules, including

+- `Workflows` for workflow management

+- `Datasets` for dataset management

+- `Quota` for checking the usage statistics

+- `Admin` for managing users on the Texera system. This tab is only visible for system admins.

+

+### Workflow Workspace UI Overview

+

+

+

+1. **Operator Library/Menu**:

+

+ It is separated into multiple dropdown menus based on the operator type, e.g., Source Operator, Search Operator, etc. You can drag and drop an operator from these dropdown menus onto the Workflow Canvas.

+

+2. **Workflow Canvas**:

+

+ It is the main playground, where you can drag and drop operators from the Operator Library. Each operator is shown as a square box and is connected to other operators by arrowed links, which indicate the data flow.

+

+3. **Properties Editor Panel**:

+

+ The panel will show up when you highlight a specific operator (by clicking on it) in the Workflow Canvas. You can customize the properties of the selected operator, for example, set the keyword for a filter. When the selected operator is configured correctly, a green ring will surround it; while a red ring usually indicates an error in configuration or connection to other operators.

+

+4. **Result Panel**:

+

+ By default or when there is no result, it is hidden. You can click on the little UP arrow to expand this panel. When a workflow is finished running, the result panel will pop up with the data. You may slide up and down or left and right to view the data inside the panel.

+

diff --git a/texera.wiki/Guide-to-Frontend-Development-(new-gui).md b/texera.wiki/Guide-to-Frontend-Development-(new-gui).md

new file mode 100644

index 00000000000..997d9fd1575

--- /dev/null

+++ b/texera.wiki/Guide-to-Frontend-Development-(new-gui).md

@@ -0,0 +1,47 @@

+**Author: Yinan Zhou**

+

+# Introduction:

+ If you are new to the Texera frontend development team or have little frontend experience with the Angular framework (version 6), this page is a simple guide on how to get started.

+

+# Preparation phase:

+ In a nutshell, angular provides modularity, scalability, and robustness to traditional frontend code design. It separates a website into different individual components that can each perform a certain level of independent tasks. It then connects different components with services so they can work collaboratively. It also provides unit testing at the component level as well as application level.

+ Other than these, angular largely inherits the traditional way of creating a web page. Each component contains four foundational files (.ts | .html | .css | spec.ts), corresponding to typescript (which is basically JavaScript with better scalability), HTML, CSS, and unit testing respectively. Just like how web pages were traditionally written, you will be coding in

+ 1) html: the structure of the component

+ 2) css: the style of the component

+ 3) typescript: the content of the component

+and additionally:

+ 4) unit tests: so that the component can be debugged in the future if it breaks

+

+Don’t be overwhelmed. You don't have to be a master of all four areas to start working on the Texera frontend. If you have basic web development experience, you can jump to the next section to get started with learning Angular. If you have no such experience, you should at least spend a few hours getting familiar with HTML, CSS, and JavaScript. The following links might be helpful.

+* An overview of HTML: https://www.youtube.com/watch?v=LcS5IgnAeUs

+* An overview of CSS: https://www.youtube.com/watch?v=Eogk9jWYeMk

+* Simple JavaScript example: https://www.youtube.com/watch?v=LFa9fnQGb3g

+

+The following links are documentation and examples. Don't try to master everything on these websites at once; use them as references. They will be helpful when you start coding, so don't spend too much time on them now.

+* HTML: https://www.w3schools.com/html/

+* Typescript: https://www.tutorialspoint.com/typescript/typescript_overview.htm

+* CSS: https://www.w3schools.com/Css/

+

+# Angular Tutorial Phase:

+At this point, you should at least be able to interpret an HTML/CSS/Typescript file with your own knowledge and the information you can find online. For the next few weeks,

+ 1) go through the tutorial provided on the Angular official website, https://angular.io/guide/quickstart

+ 2) watch tutorial videos, (ask frontend group leader to share the videos with you on google drive)

+ 3) especially pay attention to the rxjs videos, you will need them a lot.

+

+ Although these tutorial videos are helpful, it can take a long time to finish watching them. Meanwhile, it is easy to forget what you have learned if you do not practice coding it. Therefore, I recommend you begin the next phase once you finish step 1.

+

+# Frontend Code Base:

+At this point, you should know how to approach a simple angular application and interpret it using your own knowledge and the information you can find online. Download Visual Studio Code and relevant extensions, get access to Texera front-end code base (instructions can be found here). You should:

+ 1) Have a general understanding of the structure of the new-gui: what components are there, what do they do, and which services connect them?

+ 2) You should have a feature in mind that you want to implement. Locate the component and services that are relevant to the feature you want to implement. Carefully read through the code in those sections, make sure you understand what is going on behind the scene.

+ 3) Start coding, then debug, and repeat. :)

+ 4) Look for solutions in the tutorial videos I mentioned in the previous phase step 2&3 when you have questions.

+ 5) Make good use of google, stack overflow, etc. However, be aware that a lot of code examples online can be outdated since we are using the most recent version of angular with rxjs.

+

+Useful tips you should know:

+ 1) Right-click a variable/class/method name in the code base in visual studio code, then click "Peek Definition" or "Find All References". It shows you how it was defined and where it has been used.

+ 2) Right-click web page and inspect elements

+ 3) You can call `console.log(thingsYouWantToInspect)` in the code base; the logged information will appear in the browser console after you do step 2.

+

+# Unit testing:

+Don’t worry about unit testing at the beginning. Finish the feature first and then write unit tests for it.

\ No newline at end of file

diff --git a/texera.wiki/Guide-to-Implement-a-Java-Native-Operator.md b/texera.wiki/Guide-to-Implement-a-Java-Native-Operator.md

new file mode 100644

index 00000000000..aa01163ddf1

--- /dev/null

+++ b/texera.wiki/Guide-to-Implement-a-Java-Native-Operator.md

@@ -0,0 +1,278 @@

+

+In this page, we'll explain the basic concepts in Texera and use examples to show how to implement an operator.

+

+### Code structure of every operator:

+

+Every operator ideally has three classes that are found in each operator package in `core\workflow-operator\src\main\scala\edu\uci\ics\amber\operator`

+* LogicalOp

+* OperatorExecutor

+* OperatorExecutorConfig

+

+### Basic concepts:

+

+A Texera user constructs a workflow using the frontend, which consists of many operators. Each operator takes input data from its previous operator(s), does some computation, and outputs the results to the next operator(s).

+

+Suppose we have the following sample records, each of which has an ID and a tweet.

+```

+id tweet

+1 "today is a good day"

+2 "weather is bad during the day"

+```

+

+Each row is called a `Tuple`, and each column is called a `Field`.

+

+```scala

+// get the value of a field by column name

+tuple1.getField("id") // result: 1

+tuple1.getField("tweet") // result: "today is a good day"

+

+// get the value by column index

+tuple1.get(0) // result: 1

+```

+

+In this dataset, we have 2 columns, namely `id` of the integer type and `tweet` of the string type. This information is called a `Schema`.

+A `schema` contains a list of `attributes`, and each `attribute` has a `name` (name of the column) and a `type` (data type of the column).

+

+```scala

+val schema = tuple.getSchema()

+schema.getAttributes().get(0) // Attribute("id", AttributeType.Integer)

+schema.getAttributes().get(1) // Attribute("tweet", AttributeType.String)

+```
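
To make the relationship between tuples, fields, and schemas concrete, here is a small stand-alone model in Python. It is a sketch for illustration only: the classes `Attribute`, `Schema`, and `Row` and their methods are hypothetical and are not Texera's classes.

```python
from dataclasses import dataclass
from typing import Any, List


@dataclass
class Attribute:
    name: str  # name of the column
    type: str  # data type of the column, e.g. "integer" or "string"


@dataclass
class Schema:
    attributes: List[Attribute]

    def index_of(self, name: str) -> int:
        """Return the position of the column called `name`."""
        for i, attribute in enumerate(self.attributes):
            if attribute.name == name:
                return i
        raise KeyError(name)


@dataclass
class Row:
    schema: Schema
    values: List[Any]

    def get_field(self, name: str) -> Any:
        """Look a value up by column name, using the schema to find its index."""
        return self.values[self.schema.index_of(name)]


schema = Schema([Attribute("id", "integer"), Attribute("tweet", "string")])
tuple1 = Row(schema, [1, "today is a good day"])
print(tuple1.get_field("tweet"))
print(tuple1.values[0])
```

The point of the model is that a tuple stores only values; the schema supplies the names and types, which is why a field can be read either by name or by index.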

+

+

+### Example 1: Regular Expression (regex) operator

+

+A regular expression operator matches a regular expression (regex) on each input tuple. For example, if we search the regex "weather" on the `tweet` attribute, then only tuple 2 will be the result. In other words, the regular expression operator is a kind of `filter()` operation in many programming languages.

+

+To implement a regular expression operator, you will first need to write a `LogicalOp`. The following code is part of class [`RegexOpDesc`](https://github.com/apache/texera/blob/main/core/workflow-operator/src/main/scala/edu/uci/ics/amber/operator/regex/RegexOpDesc.scala).

+

+```scala

+class RegexOpDesc extends FilterOpDesc {

+

+ @JsonProperty(required = true)

+ @JsonSchemaTitle("attribute")

+ @JsonPropertyDescription("column to search regex on")

+ @AutofillAttributeName

+ var attribute: String = _

+

+ @JsonProperty(required = true)

+ @JsonSchemaTitle("regex")

+ @JsonPropertyDescription("regular expression")

+ var regex: String = _

+

+ @JsonProperty(required = false, defaultValue = "false")

+ @JsonSchemaTitle("Case Insensitive")

+ @JsonPropertyDescription("regex match is case sensitive")

+ var caseInsensitive: Boolean = _

+}

+```

+

+The regular expression operator needs to take 3 properties from the user, namely `attribute` (the name of the column to search on), `regex` (the regular expression itself) and `caseInsensitive` (whether the match should ignore case).

+

+The `@JsonProperty` annotation will let the system know that this property needs to come from the user input, and it will automatically generate the corresponding input form in the frontend.

+Inside `@JsonProperty`, `required = true` tells the frontend that this property is required from the user. The property also needs to provide a user-friendly title (inside `@JsonSchemaTitle` annotation) and a detailed description (inside `@JsonPropertyDescription` annotation). `@AutofillAttributeName` annotation tells the frontend to provide autocomplete on attribute name (name of the column).

+

+This operator descriptor also needs to provide information about this operator, including a user-friendly name, description, the group it belongs to, and number of input/output ports.

+```scala

+ override def operatorInfo: OperatorInfo =

+ OperatorInfo(

+ userFriendlyName = "Regular Expression",

+ operatorDescription = "Search a regular expression in a string column",

+ operatorGroupName = OperatorGroupConstants.SEARCH_GROUP,

+ numInputPorts = 1,

+ numOutputPorts = 1

+ )

+```

+

+Finally, the operator descriptor needs to specify its corresponding operator executor. An `OperatorExecutor`, or `OpExec` for short, contains the implementation of the processing logic in the operator. For the regular expression operator, it corresponds to `RegexOpExec`. The OpDesc supplies an `OpExecInitInfo` with a function that creates the corresponding operator executor, `_ => new RegexOpExec(this)`. When creating a `PhysicalOp` (here via `oneToOnePhysicalOp`, the type of physical operator that should be used in most cases), the `OpExecInitInfo` is passed in for the `PhysicalOp` to use.

+

+```scala

+ PhysicalOp.oneToOnePhysicalOp(

+ executionId,

+ operatorIdentifier,

+ OpExecInitInfo(_ => new RegexOpExec(this))

+ )

+```

+

+The implementation of the regular expression operator executor is rather simple. Since this operator is doing a kind of `filter()` operation, it extends a pre-defined class `FilterOpExec`. It calls `setFilterFunc` to specify the filter function used by this operator: the `matchRegex` function. In `matchRegex`, we first get the string value of a column, and then test if the value matches the regex.

+

+```scala

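+import java.util.regex.Pattern

+

+class RegexOpExec(val opDesc: RegexOpDesc) extends FilterOpExec {

+  // Compile the user-supplied regex once, when the executor is created.

+  val pattern: Pattern = Pattern.compile(opDesc.regex)

+  this.setFilterFunc(this.matchRegex)

+

+  // Keep a tuple only if the regex is found anywhere in the chosen column.

+  def matchRegex(tuple: Tuple): Boolean = {

+    val tupleValue = tuple.getField(opDesc.attribute).toString

+    pattern.matcher(tupleValue).find

+  }

+}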

+```
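
The filter semantics can be tried out without starting Texera. The following Python sketch mirrors the idea on the sample tweets from above; the function `regex_filter` and its `case_insensitive` parameter are hypothetical names chosen for this illustration, and Python's `re.search` is used as the counterpart of Java's `Matcher.find`.

```python
import re
from typing import Any, Dict, Iterable, Iterator


def regex_filter(
    rows: Iterable[Dict[str, Any]],
    attribute: str,
    regex: str,
    case_insensitive: bool = False,
) -> Iterator[Dict[str, Any]]:
    """Yield only the rows whose `attribute` value contains a match for `regex`."""
    flags = re.IGNORECASE if case_insensitive else 0
    pattern = re.compile(regex, flags)  # compile once, reuse for every row
    for row in rows:
        if pattern.search(str(row[attribute])):
            yield row


tweets = [
    {"id": 1, "tweet": "today is a good day"},
    {"id": 2, "tweet": "weather is bad during the day"},
]
matched = list(regex_filter(tweets, "tweet", "weather"))
print(matched)
```

As in the example in the text, only tuple 2 passes the filter, and the output rows have exactly the same columns as the input rows.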

+

+This operator needs to be registered to let the system know its existence. In the `LogicalOp` class, we need to add a new entry, which specifies its operator descriptor class and a unique operator name.

+

+```scala

+@JsonSubTypes(

+ Array(

+ new Type(value = classOf[RegexOpDesc], name = "Regex"),

+ )

+)

+abstract class LogicalOp extends PortDescriptor with Serializable {

+}

+```

+

+Now this operator will be automatically available in the frontend. We can now start the system and test this operator.

+

+To add an image for this operator, go to `core/gui/src/assets/operator_images`, then add an image with the _**SAME NAME**_ as what's specified in the operator registration. The image file should be in `png` format, with a transparent background, black and white, and should be square.

+

+For example, for the regex operator, the code `new Type(value = classOf[RegexOpDesc], name = "Regex")` specified a name `Regex`, then the image file name should be `Regex.png`.

+

+

+Summary: we have gone through the steps to implement a simple regular expression operator. This operator is a type of `filter()` operation. So it's built on top of a set of pre-defined classes, `FilterOpDesc`, `FilterOpExec`, and `FilterOpExecConfig`.

+

+### Example 2: Sentiment Analysis operator

+

+A `map()` operation processes one input tuple and produces exactly one output tuple. Next, we'll briefly explain the `map()` type of operators using the Sentiment Analysis operator as an example.

+

+The sentiment analysis operator uses the Stanford NLP package to analyze the sentiment of a text. Given the example dataset above, the output of this operator looks like this:

+```

+id tweet sentiment

+1 "today is a good day" "positive"

+2 "weather is bad during the day" "negative"

+```

+

+

+The following code is the implementation of class [`SentimentAnalysisOpDesc`](https://github.com/apache/texera/blob/main/core/workflow-operator/src/main/scala/edu/uci/ics/amber/operator/huggingFace/HuggingFaceSentimentAnalysisOpDesc.scala) in Java.

+

+```java

+public class SentimentAnalysisOpDesc extends MapOpDesc {

+

+ @JsonProperty(required = true)

+ @JsonSchemaTitle("attribute")

+ @JsonPropertyDescription("column to perform sentiment analysis on")

+ @AutofillAttributeName

+ public String attribute;

+

+ @JsonProperty(value = "result attribute", required = true, defaultValue = "sentiment")

+ @JsonPropertyDescription("column name of the sentiment analysis result")

+ public String resultAttribute;

+

+ @Override

+ public OneToOneOpExecConfig operatorExecutor() {

+ return new OneToOneOpExecConfig(operatorIdentifier(), () -> new SentimentAnalysisOpExec(this));

+ }

+

+ @Override

+ public OperatorInfo operatorInfo() {

+ return new OperatorInfo(

+ "Sentiment Analysis",

+ "analyze the sentiment of a text using machine learning",

+ OperatorGroupConstants.ANALYTICS_GROUP(),

+ 1, 1

+ );

+ }

+

+ @Override

+ public Schema getOutputSchema(Schema[] schemas) {

+ if (resultAttribute == null || resultAttribute.trim().isEmpty()) {

+ return null;

+ }

+ return Schema.newBuilder().add(schemas[0]).add(resultAttribute, AttributeType.STRING).build();

+ }

+}

+```

+

+You'll notice that this operator implements a new function, `getOutputSchema`. This is because this operator adds a new column called `sentiment`. The function `getOutputSchema` returns the output schema produced by this operator given an input schema.

+

+In this implementation, `resultAttribute` is the new column name given by the user (default value is "sentiment"). If the value is empty, we return a null value to indicate that the output schema cannot be produced. The result schema includes all the attributes from the input schema, plus a new attribute of type string.

+

+The regular expression operator does not implement this function because a `filter()` operation does not add or remove any columns.

+

+The implementation of `SentimentAnalysisOpExec` extends `MapOpExec` and provides a map function. You can check the implementation in the codebase.
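
The interplay between the map function and the output schema can be sketched in a few lines of Python. Everything here is an assumption made for illustration: schemas are modeled as lists of `(name, type)` pairs, and `toy_classifier` is a keyword-based stand-in, not the model used by the real operator.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

SchemaPairs = List[Tuple[str, str]]  # (column name, column type)


def output_schema(input_schema: SchemaPairs, result_attribute: Optional[str]) -> Optional[SchemaPairs]:
    """Input columns plus one new string column; None if no name was given."""
    if result_attribute is None or not result_attribute.strip():
        return None
    return input_schema + [(result_attribute, "string")]


def add_sentiment(
    row: Dict[str, Any],
    attribute: str,
    result_attribute: str,
    classify: Callable[[str], str],
) -> Dict[str, Any]:
    """Map one input row to exactly one output row with the extra column."""
    out = dict(row)  # copy so the input row is left untouched
    out[result_attribute] = classify(row[attribute])
    return out


def toy_classifier(text: str) -> str:
    # Placeholder logic for the sketch only.
    return "negative" if "bad" in text else "positive"


row = {"id": 2, "tweet": "weather is bad during the day"}
print(output_schema([("id", "integer"), ("tweet", "string")], "sentiment"))
print(add_sentiment(row, "tweet", "sentiment", toy_classifier))
```

The schema function and the map function have to agree: every row produced by the map must contain exactly the columns that the output schema announces.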

+

+### Generic operations

+

+In Texera, currently we have 4 pre-defined operations you can extend.

+ - `filter()`: filters out any input tuple if it doesn't satisfy a condition.

+ - `map()`: for each input tuple, transforms it to exactly one output tuple.

+ - `flatmap()`: for each input tuple, transforms it to a list of output tuples.

+ - `aggregate()`: performs an aggregation, such as sum, count, average, etc.

+

+To implement an operator, you can first check if your operator can be implemented using the 4 pre-defined operations. You can find these pre-defined operations under [`texera/workflow/common/operators`](https://github.com/Texera/texera/tree/master/core/amber/src/main/scala/edu/uci/ics/texera/workflow/common/operators). Your own operator implementation should be in [`texera/workflow/operators/youroperator`](https://github.com/Texera/texera/tree/master/core/amber/src/main/scala/edu/uci/ics/texera/workflow/operators).
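
As a quick way to decide which of the four operations fits a new operator, the following plain-Python sketch applies each of them to the sample tweets. It only illustrates the semantics; it does not use the Texera classes, and the variable names are made up for this example.

```python
tweets = [
    {"id": 1, "tweet": "today is a good day"},
    {"id": 2, "tweet": "weather is bad during the day"},
]

# filter(): keep or drop each input tuple; columns are unchanged.
kept = [t for t in tweets if "good" in t["tweet"]]

# map(): exactly one output tuple per input tuple (here with one extra column).
mapped = [{**t, "length": len(t["tweet"])} for t in tweets]

# flatmap(): zero or more output tuples per input tuple (here one per word).
words = [{"id": t["id"], "word": w} for t in tweets for w in t["tweet"].split()]

# aggregate(): combine all input tuples into a summary value.
count = len(tweets)

print(kept, mapped, words, count, sep="\n")
```

If the number of output tuples per input tuple is fixed at one, `map()` is usually enough; if it varies, `flatmap()` is the better fit.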

+

+### Low-level OperatorExecutor API

+For more complicated operators, if they cannot be implemented using these operations, then you need to implement `OperatorExecutor` using the following low-level interface.

+

+```scala

+trait IOperatorExecutor {

+

+ def open(): Unit

+

+ def close(): Unit

+

+ def processTuple(tuple: Either[ITuple, InputExhausted], input: Int): Iterator[ITuple]

+

+}

+```

+

+The `open()` and `close()` functions allow you to initialize and dispose of any resources (such as opened files), respectively. The engine calls each of them once, before and after the whole execution. The important function is `processTuple`, which implements the processing logic inside the operator.

+

+The `processTuple` function takes two parameters: `tuple` and `input`. An operator can have multiple input ports, and each input port can have multiple upstream operators connected to it (e.g., Union), so `input: Int` indicates which input port the current tuple comes from. The parameter `tuple` is either an `ITuple` or an `InputExhausted` marker, which indicates that all data from an upstream operator has been consumed. The function returns an `Iterator[ITuple]`, so zero or more output tuples can be produced for each call. `processTuple` is called whenever a new input tuple arrives, and once more when an input is exhausted. When an input port is connected to multiple upstream operators, `InputExhausted` is processed multiple times (once per upstream operator).
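
The calling pattern is easier to see with a toy operator. The Python sketch below imitates the interface with a counting operator that emits nothing per tuple and one result when the input is exhausted. The names `CountOperator`, `InputExhausted`, and `run` are hypothetical, and the driver assumes a single upstream operator, so the exhausted marker arrives exactly once.

```python
from typing import Any, Dict, Iterator, List, Union


class InputExhausted:
    """Marker object: the upstream operator has no more tuples."""


class CountOperator:
    def open(self) -> None:
        self.count = 0  # initialize state before any tuple arrives

    def close(self) -> None:
        pass  # nothing to release in this sketch

    def process_tuple(
        self, tuple_: Union[Dict[str, Any], InputExhausted], input_port: int
    ) -> Iterator[Dict[str, Any]]:
        if isinstance(tuple_, InputExhausted):
            yield {"count": self.count}  # emit the result once input ends
        else:
            self.count += 1  # regular tuple: update state, emit nothing


def run(op: CountOperator, rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Drive the operator the way an engine might: open, feed, exhaust, close."""
    op.open()
    out: List[Dict[str, Any]] = []
    for row in rows:
        out.extend(op.process_tuple(row, 0))  # consuming the iterator runs the logic
    out.extend(op.process_tuple(InputExhausted(), 0))
    op.close()
    return out


print(run(CountOperator(), [{"id": 1}, {"id": 2}]))
```

With several upstream operators on the same port, an operator like this would have to wait for the last `InputExhausted` before emitting its result.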

+

+## General content:

+### User input information

+Texera's backend determines the UI information that is sent to the frontend. After receiving this information, the frontend renders the corresponding input form.

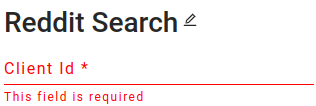

+* Input Box

+

+

+

+ Here is an example of a user input box, with the name “Client Id” and its description.

+ ```scala

+ @JsonProperty(required=true)

+ @JsonSchemaTitle("Client Id")

+ @JsonPropertyDescription("Client id used to access the Reddit API")

+ var clientId: String = _

+ ```

+

+

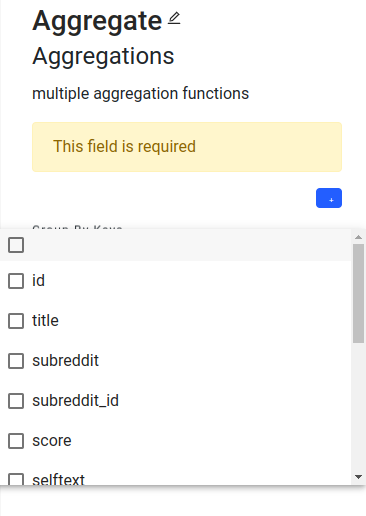

+* Multiple selection

+

+

+

+ Here is an example of a multiple selection in the aggregate operator.

+ ```scala

+ @JsonProperty(value = "attribute", required = true)

+ @JsonPropertyDescription("column to calculate average value")

+ @AutofillAttributeName

+ var attribute: String = _

+ ```

+ In the backend, the list of attribute names is used to populate the selection options. For a multi-selection, the property type needs to be a list.

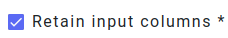

+* Checkbox

+

+

+

+ For a checkbox, we set the data type to Boolean; the frontend then renders the property as a checkbox. Here is an example from the pythonUDF operator.

+ ```scala

+ @JsonProperty(required = true, defaultValue = "true")

+ @JsonSchemaTitle("Retain input columns")

+ @JsonPropertyDescription("Keep the original input columns?")

+ var retainInputColumns: Boolean = Boolean.box(false)

+ ```

+

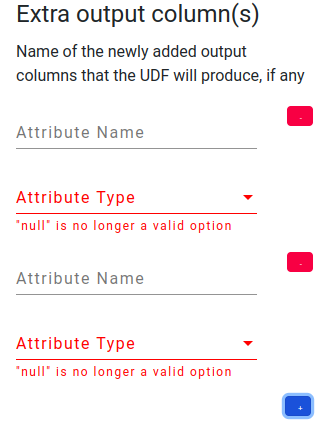

+* List

+

+

+

+ The pythonUDF operator contains an example of a list, which is used for the output schema. Clicking the blue button adds one more pair of attribute information, and clicking the red button deletes that pair. In the backend, a list holds the attribute values.

+ ```scala

+ @JsonProperty

+ @JsonSchemaTitle("Extra output column(s)")

+ @JsonPropertyDescription(

+ "Name of the newly added output columns that the UDF will produce, if any"

+ )

+ var outputColumns: List[Attribute] = List()

+ ```

+

+### Registration and icon

+In the file `amber/src/main/scala/edu/uci/ics/texera/workflow/common/operators/LogicalOp.scala`, you will find a list of all registered operators, complete with their descriptor classes and names. After adding an operator's information, you can assign an icon to it. All operator icons are stored in the `/core/new-gui/src/assets/operator_images` directory. It's essential to ensure that the icon filename matches its respective operator descriptor name.

+

+

diff --git a/texera.wiki/Guide-to-Implement-a-Python-Native-Operator-(converting-from-a-Python-UDF).md b/texera.wiki/Guide-to-Implement-a-Python-Native-Operator-(converting-from-a-Python-UDF).md

new file mode 100644

index 00000000000..bb9523f65ea

--- /dev/null

+++ b/texera.wiki/Guide-to-Implement-a-Python-Native-Operator-(converting-from-a-Python-UDF).md

@@ -0,0 +1,86 @@

+In the [page for PythonUDF](https://github.com/Texera/texera/wiki/Guide-to-Use-a-Python-UDF), we introduced the basic concepts of PythonUDF and described each API. To let other users use a Python operator, it must be implemented as a native operator.

+

+In this section, we will discuss how to implement a Python native operator and let future users drag and drop it on the UI. We will start by implementing a sample UDF then talk about how to convert it to a native operator.

+

+## **Starting with a Sample Python UDF**

+

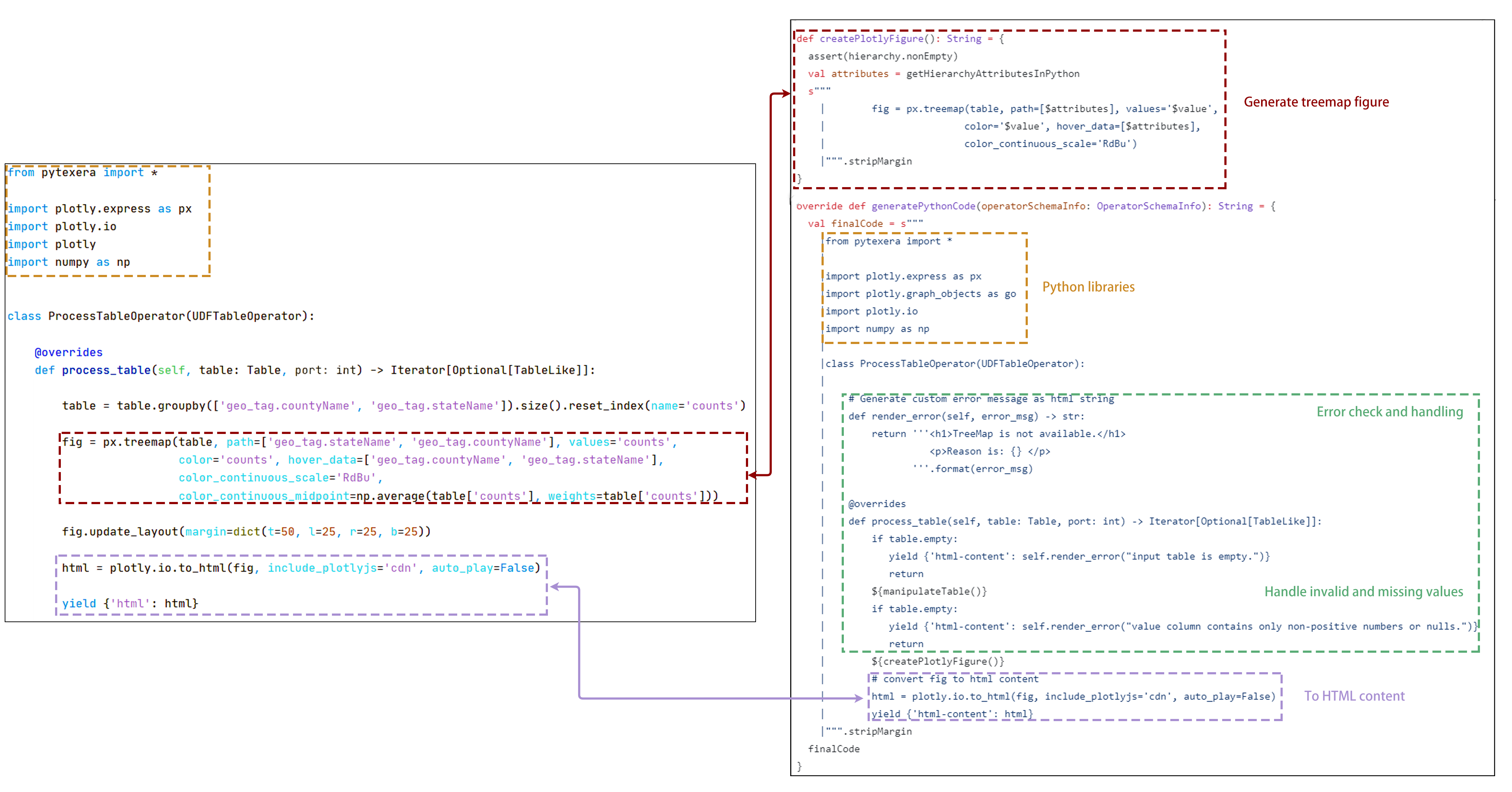

+Suppose we have a sample Python UDF named `Treemap Visualizer`, as presented below:

+

+ +

+

+The UDF takes a CSV file as its input. For this example, we use a dataset of geo-location information of tweets. A sample of the dataset is shown below:

+

+

+

+


+The `Treemap Visualizer` UDF takes the CSV file as a table (using the Table API) and outputs an HTML page that contains a treemap figure. The HTML page will be consumed by the HTML visualizer operator, and the `View Result` operator eventually displays the figure in the browser. The visualization is presented below:

+

+

+


+Now, let's take a closer look at the `Treemap Visualizer` UDF.

+As shown in the following code block, the UDF contains 3 steps:

+```python

+from pytexera import *

+

+import plotly.express as px

+import plotly.io

+import plotly

+import numpy as np

+

+

+class ProcessTableOperator(UDFTableOperator):

+

+ @overrides

+ def process_table(self, table: Table, port: int) -> Iterator[Optional[TableLike]]:

+ table = table.groupby(['geo_tag.countyName','geo_tag.stateName']).size().reset_index(name='counts')

+ #print(table)

+ fig = px.treemap(table, path=['geo_tag.stateName','geo_tag.countyName'], values='counts',

+ color='counts', hover_data=['geo_tag.countyName','geo_tag.stateName'],

+ color_continuous_scale='RdBu',

+ color_continuous_midpoint=np.average(table['counts'], weights=table['counts']))

+ fig.update_layout(margin=dict(t=50, l=25, r=25, b=25))

+ html = plotly.io.to_html(fig, include_plotlyjs='cdn', auto_play=False)

+ yield {'html': html}

+```

+

+1. It first performs an aggregation with a groupby to count the rows for each county and state (the `geo_tag.countyName` and `geo_tag.stateName` columns).

+2. Then it invokes the Plotly library to create a treemap figure based on the aggregated dataset.

+3. Lastly, it converts the treemap figure object into an HTML string, by invoking the `to_html` function in the Plotly library, and yields it as the output.
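
To check what the aggregation in step 1 produces without installing pandas or Plotly, the same counting can be reproduced with the standard library. This is a sketch under stated assumptions: the helper `count_by_county_and_state` is a hypothetical name, and the three sample rows are made up for the example rather than taken from the real dataset.

```python
from collections import Counter
from typing import Any, Dict, List


def count_by_county_and_state(rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Count rows per (county, state) pair, sorted by the group keys."""
    counts = Counter(
        (row["geo_tag.countyName"], row["geo_tag.stateName"]) for row in rows
    )
    return [
        {"geo_tag.countyName": county, "geo_tag.stateName": state, "counts": n}
        for (county, state), n in sorted(counts.items())
    ]


# Made-up sample rows, for illustration only.
sample = [
    {"geo_tag.countyName": "Orange", "geo_tag.stateName": "California"},
    {"geo_tag.countyName": "Kings", "geo_tag.stateName": "New York"},
    {"geo_tag.countyName": "Orange", "geo_tag.stateName": "California"},
]
for record in count_by_county_and_state(sample):
    print(record)
```

Each output record corresponds to one rectangle in the treemap, with `counts` determining its size and color.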

+

+## **Convert the UDF into a Python Native Operator**

+

+Next we convert the `Treemap Visualizer` UDF into a native operator.

+As described in the [page for Java native operator](https://github.com/Texera/texera/wiki/Guide-to-Implement-a-Java-Native-Operator), a native operator requires the definitions of a descriptor (Desc), an executor (Exec), and a configuration (OpConfig). A Python native operator also requires these definitions, with some unique tweaks. We use the `Treemap Visualization` operator as an example to elaborate on the differences:

+### Operator Descriptor (Desc)

+* Operator information

+


+ The operator information is the same as a Java native operator, which contains the name, description, group, input port, and output port information.

+* Extending interface

+ Instead of implementing the `OperatorDescriptor` interface, a Python native operator implements the `PythonOperatorDescriptor` interface by overriding the `generatePythonCode` method. Our example is a `VisualizationOperator`, and we need to extend it as well.

+* Python content

+ The `generatePythonCode` method returns the actual Python code as a string, as shown below:

+

+

+

+ Now, let's compare the code in the PythonUDF with what we write in the descriptor. As we can see, both are responsible for generating the treemap figure and converting it into an HTML page. Additionally, we've included null-value handling and error alerts to make the operator more comprehensive.

+* Output schema

+ The Python UDF needs to define the output Schema in the property editor, while for native operators the output Schema is defined by implementing `getOutputSchema`. To do so, we use a Schema builder and add the output schema with the attribute name “html-content”.

+ ```scala

+ override def getOutputSchema(schemas: Array[Schema]): Schema = {

+ Schema.newBuilder.add(new Attribute("html-content", AttributeType.STRING)).build

+ }

+ ```

+* Chart type

+ Since this operator is a visualization operator, we need to register its chart type as a `HTML_VIZ`.

+ ```scala

+ override def chartType(): String = VisualizationConstants.HTML_VIZ

+ ```

+### Executor (Exec)

+In all Python native operators, the executor is simply the `PythonUDFExecutor`.

+### Operator Configuration

+A Python native operator shares the same configuration as a Java native operator.

+### Registration

+Registration follows the same process as for a Java native operator.

+

+## **Test**

+

+After following all the steps above, you should be able to drag and drop the operator into the canvas. During the execution, the operator will output the expected result.

diff --git a/texera.wiki/Guide-to-Use-a-Python-UDF.md b/texera.wiki/Guide-to-Use-a-Python-UDF.md

new file mode 100644

index 00000000000..8252a94af36

--- /dev/null

+++ b/texera.wiki/Guide-to-Use-a-Python-UDF.md

@@ -0,0 +1,159 @@

+## What is Python UDF

+User-defined Functions (UDFs) provide a means to incorporate custom logic into Texera. Texera offers comprehensive Python UDF APIs, enabling users to accomplish various tasks. This guide will delve into the usage of UDFs, breaking down the process step by step.

+

+

+***

+

+

+## UDF UI and Editor

+

+

+The UDF operator's interface requires the user to provide three inputs: `Python code`, `worker count`, and `output schema`.

+

+


+

+

+-  Users can click on the "Edit code content" button to open the UDF code editor, where they can enter their custom Python code to define the desired operator.

+


+

+-  Users have the flexibility to adjust the parallelism of the UDF operator by modifying the number of workers. The engine will then create the corresponding number of workers to execute the same operator in parallel.

+


+

+-  Users need to provide the output schema of the UDF operator, which describes the output data's fields.

+ - The option `Retain input columns` allows users to include the input schema as the foundation for the output schema.

+ - The `Extra output column(s)` list allows users to define additional fields that should be included in the output schema.

+

+


+

+

+

+

+

+-  _Optionally_, users can click on the pencil icon next to the operator name to rename the operator.

+

+

+***

+

+## Operator Definition

+

+### Iterator-based operator

+In Texera, all operators are implemented as iterators, including Python UDFs.

+Conceptually, a defined operator is executed as follows:

+

+```python

+operator = UDF() # initialize a UDF operator

+

+... # some other initialization logic

+

+# the main process loop

+while input_stream.has_more():

+    input_data = next_data()

+    output_iterator = operator.process(input_data)

+    for output_data in output_iterator:

+        send(output_data)

+

+... # some cleanup logic

+

+```

+

+### Operator Life Cycle

+The complete life cycle of a UDF operator consists of the following APIs:

+1. `open() -> None` Open a context of the operator. It is typically used for loading or initializing resources, such as a file, a model, or an API client. It will be invoked once per operator.

+2. `process(data, port: int) -> Iterator[Optional[data]]` Process one unit of input data from the given port, returning an iterator of optional data as output. It will be invoked once for every unit of data.

+3. `on_finish(port: int) -> Iterator[Optional[data]]` Callback when one input port is exhausted, returning an iterator of optional data as output. It will be invoked once per port.

+4. `close() -> None` Close the context of the operator. It will be invoked once per operator.
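
The order of these calls can be sketched with a toy driver. This is only an illustration in plain Python: `UppercaseOperator` and `run` are hypothetical names, the class does not extend any pytexera base class, and the real engine's scheduling is more involved than this single-port loop.

```python
from typing import Iterator, List, Optional


class UppercaseOperator:
    """Hypothetical stand-in for a UDF; it does not use the pytexera classes."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def open(self) -> None:
        # Load resources once, before any data arrives.
        self.calls.append("open")

    def process(self, data: dict, port: int) -> Iterator[Optional[dict]]:
        # Called once for every unit of input data.
        self.calls.append("process")
        yield {**data, "text": data["text"].upper()}

    def on_finish(self, port: int) -> Iterator[Optional[dict]]:
        # Called once when the given input port is exhausted.
        self.calls.append("on_finish")
        yield None

    def close(self) -> None:
        # Release resources once, after all ports are finished.
        self.calls.append("close")


def run(operator: UppercaseOperator, rows: List[dict], port: int = 0) -> List[dict]:
    """Toy driver that calls the life-cycle methods in the documented order."""
    outputs: List[dict] = []
    operator.open()
    try:
        for row in rows:
            outputs.extend(out for out in operator.process(row, port) if out is not None)
        outputs.extend(out for out in operator.on_finish(port) if out is not None)
    finally:
        operator.close()
    return outputs


operator = UppercaseOperator()
results = run(operator, [{"text": "a"}, {"text": "b"}])
```

In this sketch, `operator.calls` records one `open`, one `process` per input row, one `on_finish` for the single port, and one `close`.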

+

+

+### Process Data APIs

+There are three APIs to process the data in different units.

+

+1. Tuple API.

+

+```python

+

+class ProcessTupleOperator(UDFOperatorV2):

+

+    def process_tuple(self, tuple_: Tuple, port: int) -> Iterator[Optional[TupleLike]]:

+        yield tuple_

+

+```

+Tuple API takes one input tuple from a port at a time. It returns an iterator of optional `TupleLike` instances. A `TupleLike` is any data structure that supports key-value pairs, such as `pytexera.Tuple`, `dict`, `defaultdict`, `NamedTuple`, etc.

+

+Tuple API is useful for implementing functional operations which are applied to tuples one by one, such as map, reduce, and filter.

+

+2. Table API.

+```python

+

+class ProcessTableOperator(UDFTableOperator):

+

+    def process_table(self, table: Table, port: int) -> Iterator[Optional[TableLike]]:

+        yield table

+```

+Table API consumes one `Table` at a time, which consists of all the tuples from a port. It returns an iterator of optional `TableLike` instances. A `TableLike` is a collection of `TupleLike`; currently, `pytexera.Table` and `pandas.DataFrame` are supported as `TableLike` instances. More flexible types will be supported down the road.

+

+Table API is useful for implementing blocking operations that will consume all the data from one port, such as join, sort, and machine learning training.

+

+3. Batch API.

+```python

+

+class ProcessBatchOperator(UDFBatchOperator):

+

+    BATCH_SIZE = 10

+

+    def process_batch(self, batch: Batch, port: int) -> Iterator[Optional[BatchLike]]:

+        yield batch

+```

+Batch API consumes a batch of tuples at a time. Similar to `Table`, a `Batch` is also a collection of `Tuple`s; however, its size is defined by `BATCH_SIZE`, and one port can have multiple batches. It returns an iterator of optional `BatchLike` instances. A `BatchLike` is a collection of `TupleLike`; currently, `pytexera.Batch` and `pandas.DataFrame` are supported as `BatchLike` instances. More flexible types will be supported down the road.

+

+The Batch API serves as a hybrid API combining the features of both the Tuple and Table APIs. It is particularly valuable for striking a balance between time and space considerations, offering a trade-off that optimizes efficiency.

+

+_In all three APIs, `yield None` can be used to produce no output._
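
The difference between the three units of data can be sketched in plain Python. This is a minimal illustration, not pytexera code: rows are plain dicts standing in for `TupleLike` values, and `keep_even`, `to_batches`, and `sort_all` are hypothetical helper names chosen for this example.

```python
from typing import Dict, Iterator, List, Optional

Row = Dict[str, int]  # plain-dict stand-in for a TupleLike


def keep_even(row: Row) -> Iterator[Optional[Row]]:
    """Tuple-style processing: one row in, zero or one row out."""
    if row["value"] % 2 == 0:
        yield row
    else:
        yield None  # nothing to emit for this row


def to_batches(rows: List[Row], batch_size: int) -> Iterator[List[Row]]:
    """Batch-style grouping: cut the stream into chunks of at most batch_size rows."""
    for start in range(0, len(rows), batch_size):
        yield rows[start:start + batch_size]


def sort_all(rows: List[Row]) -> Iterator[List[Row]]:
    """Table-style processing: needs every row from the port before emitting."""
    yield sorted(rows, key=lambda row: row["value"])


rows = [{"value": v} for v in (5, 2, 4, 1, 3)]
evens = [out for row in rows for out in keep_even(row) if out is not None]
batch_sizes = [len(batch) for batch in to_batches(rows, batch_size=2)]
sorted_values = [row["value"] for row in next(sort_all(rows))]
```

The tuple-style filter can emit as soon as each row arrives, the table-style sort has to hold all rows in memory, and the batch-style grouping sits in between by bounding how many rows are held at once.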

+

+### Schemas

+

+A UDF has an input Schema and an output Schema. The input schema is determined by the upstream operator's output schema and the engine will make sure the input data (tuple, table, or batch) matches the input schema. On the other hand, users are required to define the output schema of the UDF, and it is the user's responsibility to make sure the data output from the UDF matches the defined output schema.
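
Because matching the output schema is the user's responsibility, a small self-check inside the UDF code can catch mismatches early. The sketch below is one possible approach in plain Python; `OUTPUT_SCHEMA` and `check_output` are hypothetical names and are not part of the pytexera API, and the mapping of column names to Python types is an assumption made for illustration.

```python
from typing import Any, Dict

# Hypothetical output schema: column name -> expected Python type.
OUTPUT_SCHEMA: Dict[str, type] = {"id": int, "label": str}


def check_output(row: Dict[str, Any], schema: Dict[str, type]) -> None:
    """Raise ValueError if the row's columns or value types differ from the schema."""
    missing = schema.keys() - row.keys()
    extra = row.keys() - schema.keys()
    if missing or extra:
        raise ValueError(
            f"missing columns: {sorted(missing)}, unexpected columns: {sorted(extra)}"
        )
    for name, expected in schema.items():
        # None is allowed here as a null value for any column.
        if row[name] is not None and not isinstance(row[name], expected):
            raise ValueError(f"column {name!r} should be {expected.__name__}")


check_output({"id": 1, "label": "cat"}, OUTPUT_SCHEMA)  # passes silently
```

Calling such a check on each row before yielding it turns a schema mismatch into an immediate, readable error in the UDF itself.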

+

+### Ports

+

+- Input ports:

+A UDF can have zero, one, or multiple input ports, and different ports can have different input schemas. Each port can accept multiple links, as long as they share the same schema.

+

+- Output ports:

+Currently, a UDF must have exactly one output port. This means it cannot be used as a terminal operator (i.e., an operator without output ports), nor can it have more than one output port.

+

+#### 1-out UDF

+

+This UDF has no input ports and one output port. It is considered a source operator (an operator that produces data without an upstream operator). It has a special API:

+```python

+

+class GenerateOperator(UDFSourceOperator):

+

+    @overrides

+    def produce(self) -> Iterator[Union[TupleLike, TableLike, None]]:

+        yield

+```

+

+This `produce()` API returns an iterator of `TupleLike`, `TableLike`, or simply `None`.

+

+See [Generator Operator](https://github.com/Texera/texera/blob/master/core/amber/src/main/python/pytexera/udf/examples/generator_operator.py) for an example of a 1-out UDF.

+

+

+#### 2-in UDF

+

+This UDF has two input ports, namely the `model` port and the `tuples` port. The `tuples` port depends on the `model` port, which means that during execution, the `model` port will execute first, and the `tuples` port will start after the `model` port consumes all its input data.

+This dependency is particularly useful for implementing machine learning inference operators: a machine learning model is sent into the 2-in UDF through the `model` port and becomes operator state, and the tuples then arrive through the `tuples` port to be processed by the model.

+

+An example of a 2-in UDF:

+```

+class SVMClassifier(UDFOperatorV2):

+

+

+ @overrides

+ def process_tuple(self, tuple_: Tuple, port: int) -> Iterator[Optional[TupleLike]]: